Advanced RAG Optimization: Enhancing Document Retrieval with Question Generation

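This article introduces a text augmentation technique that uses generated questions to improve document retrieval from a vector database. Questions related to each text fragment are generated and indexed alongside it, which augments the standard retrieval process and raises the chance of finding relevant documents to use as context for generative question answering.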

Implementation Steps

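By enriching text fragments with related questions, we aim to significantly improve the accuracy of identifying the parts of a document most likely to contain the answer to a user's query. An implementation typically involves the following steps:

- Document parsing and text chunking: process the PDF document and split it into manageable text fragments.

- Question augmentation: use a language model to generate relevant questions at the document level or the fragment level.

- Vector store creation: compute embeddings for the documents with an embedding model and build a FAISS vector store.

- Retrieval and answer generation: use FAISS to find the most relevant document, then generate an answer from the context it provides.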
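To see why indexing questions alongside fragments can help, the steps above can be sketched in miniature. This is only a toy: a bag-of-words cosine similarity stands in for the embedding model and FAISS, the questions are hand-written stand-ins for LLM output, and `fragments`, `questions`, `embed`, `cosine`, and `retrieve` are hypothetical names that do not come from the article.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


fragments = {
    "frag-0": "Greenhouse gases trap heat in the atmosphere and raise global temperatures.",
    "frag-1": "Solar panels convert sunlight into electricity using photovoltaic cells.",
}

# Hand-written stand-ins for questions a language model might generate.
questions = {
    "frag-0": ["What causes global warming?"],
    "frag-1": ["How do solar panels produce power?"],
}

# Index every fragment plus its questions; each entry remembers its parent fragment.
index = []
for frag_id, text in fragments.items():
    index.append((embed(text), "ORIGINAL", frag_id))
    for question in questions[frag_id]:
        index.append((embed(question), "AUGMENTED", frag_id))


def retrieve(query):
    """Return (entry type, parent id, parent text) for the best-matching entry."""
    query_vec = embed(query)
    _, kind, frag_id = max(index, key=lambda entry: cosine(query_vec, entry[0]))
    return kind, frag_id, fragments[frag_id]


print(retrieve("What is the cause of global warming?"))
```

Here the query shares more words with the generated question than with the fragment itself, so the best match is the `AUGMENTED` entry, which still leads back to the text of `frag-0`. Real embeddings capture meaning rather than word overlap, so the effect is less stark in practice.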

A setting specifies whether question augmentation happens at the document level or the fragment level.

from enum import Enum


class QuestionGeneration(Enum):
    """
    Enum class to specify the level of question generation for document processing.

    Attributes:
        DOCUMENT_LEVEL (int): Represents question generation at the entire document level.
        FRAGMENT_LEVEL (int): Represents question generation at the individual text fragment level.
    """
    DOCUMENT_LEVEL = 1
    FRAGMENT_LEVEL = 2
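The code in the following sections reads several module-level settings (`QUESTION_GENERATION`, `QUESTIONS_PER_DOCUMENT`, and the `*_TOKENS` constants) whose values the article does not give. A possible configuration might look like the sketch below; every number is an illustrative assumption, not the author's setting.

```python
from enum import Enum


class QuestionGeneration(Enum):
    DOCUMENT_LEVEL = 1
    FRAGMENT_LEVEL = 2


# Illustrative values only; the article does not state the ones it used.
DOCUMENT_MAX_TOKENS = 4000      # size of each top-level "document" chunk
DOCUMENT_OVERLAP_TOKENS = 100   # tokens shared by neighbouring documents
FRAGMENT_MAX_TOKENS = 128       # size of each fragment inside a document
FRAGMENT_OVERLAP_TOKENS = 16    # tokens shared by neighbouring fragments

# Choose where questions are generated: once per document or once per fragment.
QUESTION_GENERATION = QuestionGeneration.DOCUMENT_LEVEL

# Minimum number of questions requested from the model per call.
QUESTIONS_PER_DOCUMENT = 40
```

Fragment-level generation calls the model once per fragment, so it tends to cost more than document-level generation on the same input.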

Implementation

Question generation
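The `generate_questions` function in this section passes the model output through a `clean_and_filter_questions` helper that the article does not show. A minimal sketch of what such a helper might do, assuming it only needs to strip list numbering and drop lines that are not questions:

```python
import re
from typing import List


def clean_and_filter_questions(questions: List[str]) -> List[str]:
    """Strip leading list numbers and keep only entries that end with '?'.

    Hypothetical helper: the filtering rules here are an assumption.
    """
    cleaned = []
    for question in questions:
        # Remove a leading list marker such as "1. " or "2) ".
        text = re.sub(r"^\s*\d+[.)]\s*", "", question).strip()
        # Headers and stray answers rarely end with a question mark.
        if text.endswith("?"):
            cleaned.append(text)
    return cleaned
```

Since `generate_questions` reads `result.question_list`, `QuestionList` is presumably a schema class (for example a Pydantic model) with a `question_list` field holding a list of strings.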

from typing import List

from langchain_core.prompts import PromptTemplate
from langchain_openai import ChatOpenAI

# QuestionList, clean_and_filter_questions and QUESTIONS_PER_DOCUMENT are
# expected to be defined at module level before this function is called.


def generate_questions(text: str) -> List[str]:
    """
    Generates a list of questions based on the provided text using OpenAI.

    Args:
        text (str): The context data from which questions are generated.

    Returns:
        List[str]: A list of unique, filtered questions.
    """
    llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)
    prompt = PromptTemplate(
        input_variables=["context", "num_questions"],
        template="Using the context data: {context}\n\nGenerate a list of at least {num_questions} "
                 "possible questions that can be asked about this context. Ensure the questions are "
                 "directly answerable within the context and do not include any answers or headers. "
                 "Separate the questions with a new line character."
    )
    # Ask the model for structured output that matches the QuestionList schema
    chain = prompt | llm.with_structured_output(QuestionList)
    input_data = {"context": text, "num_questions": QUESTIONS_PER_DOCUMENT}
    result = chain.invoke(input_data)

    # Extract the list of questions from the QuestionList object
    questions = result.question_list

    filtered_questions = clean_and_filter_questions(questions)
    # Deduplicate; note that set() does not preserve the original order
    return list(set(filtered_questions))

Main processing flow
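The main flow calls a `split_document` helper twice, first to cut the content into documents and then to cut each document into fragments, but the article does not include it. One way it could work, assuming "tokens" simply means whitespace-delimited words rather than model tokens:

```python
import re
from typing import List


def split_document(document: str, chunk_size: int, chunk_overlap: int) -> List[str]:
    """Split text into chunks of at most chunk_size words.

    Consecutive chunks share chunk_overlap words. Hypothetical sketch:
    a real implementation might count model tokens instead of words.
    """
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be non-negative and smaller than chunk_size")

    tokens = re.findall(r"\w+", document)
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + chunk_size]))
        # Stop once the current chunk reaches the end of the text.
        if start + chunk_size >= len(tokens):
            break
    return chunks
```

Note that this word-based split discards punctuation. That is acceptable for a sketch, but a production splitter would normally preserve the original text.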

def process_documents(content: str, embedding_model: OpenAIEmbeddings):

"""

Process the document content, split it into fragments, generate questions,

create a FAISS vector store, and return a retriever.

Args:

content (str): The content of the document to process.

embedding_model (OpenAIEmbeddings): The embedding model to use for vectorization.

Returns:

VectorStoreRetriever: A retriever for the most relevant FAISS document.

"""

# Split the whole text content into text documents

text_documents = split_document(content, DOCUMENT_MAX_TOKENS, DOCUMENT_OVERLAP_TOKENS)

print(f'Text content split into: {len(text_documents)} documents')

documents = []

counter = 0

for i, text_document in enumerate(text_documents):

text_fragments = split_document(text_document, FRAGMENT_MAX_TOKENS, FRAGMENT_OVERLAP_TOKENS)

print(f'Text document {i} - split into: {len(text_fragments)} fragments')

for j, text_fragment in enumerate(text_fragments):

documents.append(Document(

page_cnotallow=text_fragment,

metadata={"type": "ORIGINAL", "index": counter, "text": text_document}

))

counter += 1

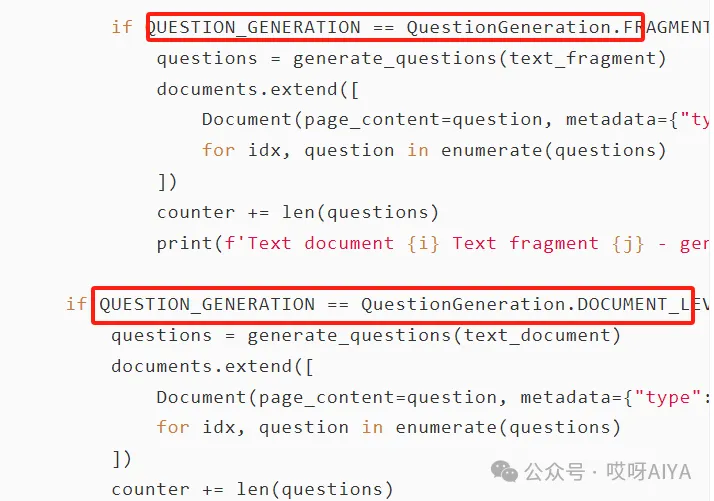

if QUESTION_GENERATION == QuestionGeneration.FRAGMENT_LEVEL:

questions = generate_questions(text_fragment)

documents.extend([

Document(page_cnotallow=question, metadata={"type": "AUGMENTED", "index": counter + idx, "text": text_document})

for idx, question in enumerate(questions)

])

counter += len(questions)

print(f'Text document {i} Text fragment {j} - generated: {len(questions)} questions')

if QUESTION_GENERATION == QuestionGeneration.DOCUMENT_LEVEL:

questions = generate_questions(text_document)

documents.extend([

Document(page_cnotallow=question, metadata={"type": "AUGMENTED", "index": counter + idx, "text": text_document})

for idx, question in enumerate(questions)

])

counter += len(questions)

print(f'Text document {i} - generated: {len(questions)} questions')

for document in documents:

print_document("Dataset", document)

print(f'Creating store, calculating embeddings for {len(documents)} FAISS documents')

vectorstore = FAISS.from_documents(documents, embedding_model)

print("Creating retriever returning the most relevant FAISS document")

return vectorstore.as_retriever(search_kwargs={"k": 1})該技術為提高基于向量的文檔檢索系統的信息檢索質量提供了一種方法。此實現使用了大模型的API,這可能會根據使用情況產生成本。
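For the retrieval and answer generation step, the retriever returned by `process_documents` can be queried with `invoke`, and the hit turned into a prompt. The sketch below assumes the answer context should come from `metadata["text"]` (the parent document stored on both `ORIGINAL` and `AUGMENTED` entries) rather than from `page_content`, which for an augmented entry is only a question. `build_prompt` and its prompt wording are hypothetical.

```python
from typing import Any


def build_prompt(retriever: Any, question: str) -> str:
    """Fetch the best-matching entry and build an answer prompt from its parent text.

    `retriever` is any object with an invoke(query) method that returns a list
    of documents exposing a `metadata` dict, such as a LangChain retriever.
    """
    docs = retriever.invoke(question)
    if not docs:
        raise LookupError("the retriever returned no documents")

    # Both ORIGINAL and AUGMENTED entries carry the parent text in metadata,
    # so a hit on a generated question still yields usable context.
    context = docs[0].metadata["text"]
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )
```

The resulting string can then be sent to whichever chat model generates the final answer.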

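This article is reprinted from the WeChat public account 哎呀AIYA.

Original link: https://mp.weixin.qq.com/s/bjI02uOeAGXSelCApb0yOQ

© Copyright belongs to the author. Reprints must credit the source; otherwise legal liability will be pursued.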


Last modified: 2024-9-14 14:18:55
