六個步驟搞定云原生應用監控和告警

云原生系統搭建完畢之后,要建立可觀測性和告警,有利于了解整個系統的運行狀況。基于Prometheus搭建的云原生監控和告警是業內常用解決方案,每個云原生參與者都需要了解。

本文主要以springboot應用為例,講解云原生應用監控和告警的實操,對于理論知識講解不多。等朋友們把實操都理順之后,再補充理論知識,就更容易理解整個體系了。

1、監控告警技術選型

kubernetes集群非常復雜,有容器基礎資源指標、k8s集群Node指標、集群里的業務應用指標等等。面對大量需要監控的指標,傳統監控方案Zabbix對于云原生監控的支持不是很好。

所以需要使用更適合云原生的監控告警方案prometheus,prometheus和云原生是密不可分的,并且prometheus現已成為云原生生態中監控的事實標準。下面來一步步搭建基于prometheus的監控告警方案。

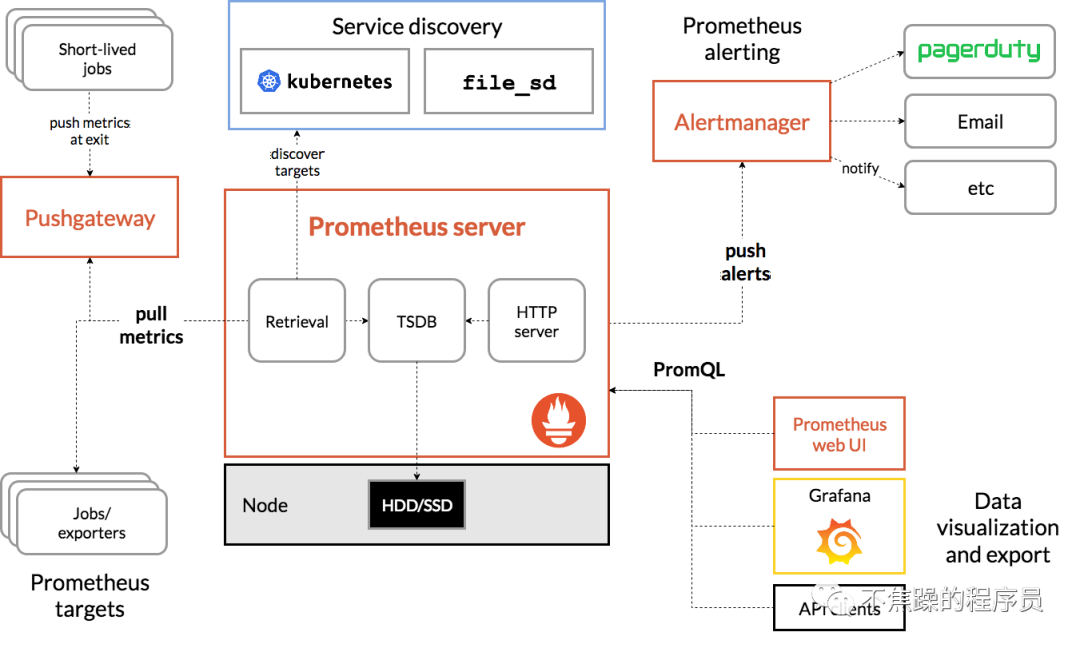

prometheus的基本原理是:主動去**被監控的系統**拉取各項指標,然后匯總存入到自身的時序數據庫,最后再通過圖表展示出來,或者是根據告警規則觸發告警。被監控的系統要主動暴露接口給prometheus去抓取指標。流程圖如下:
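To make the pull model concrete, here is a minimal Python sketch. It is not how Prometheus is implemented; the target names, metric names, and the `scrape_once` helper are all hypothetical and only illustrate the scrape, store, and evaluate cycle described above.

```python
import time
from collections import defaultdict


def scrape_once(targets, store, now=None):
    """Pull the current metrics from every target and append them to the store."""
    ts = time.time() if now is None else now
    for target_name, read_metrics in targets.items():
        for metric, value in read_metrics().items():
            # Each (target, metric) pair forms one time series of (timestamp, value).
            store[(target_name, metric)].append((ts, value))


# Hypothetical targets: each one exposes a function returning its current metrics.
targets = {
    "app-1": lambda: {"http_requests_total": 42.0},
    "node-1": lambda: {"cpu_usage": 0.9},
}

store = defaultdict(list)
scrape_once(targets, store, now=0)    # first scrape
scrape_once(targets, store, now=15)   # next scrape, one 15s interval later

# A toy "alerting rule": latest cpu_usage sample above 0.85.
firing = [
    key for key, samples in store.items()
    if key[1] == "cpu_usage" and samples[-1][1] > 0.85
]
```

The monitored side only answers when asked; the scraper owns the schedule and the storage, which is why every target in the rest of this article has to expose an HTTP endpoint.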

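2. Prerequisites

This walkthrough assumes Docker and Kubernetes are installed and a Spring Boot application is already deployed in the K8S cluster.

Assume the cluster has 4 nodes: k8s-master (10.20.1.21), k8s-worker-1 (10.20.1.22), k8s-worker-2 (10.20.1.23), and k8s-worker-3 (10.20.1.24).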

3. Installing Prometheus

3.1. Create the namespace on the k8s-master node

kubectl create ns monitoring

3.2. Prepare the ConfigMap file

Prepare the ConfigMap file prometheus-config.yaml. For now it only configures a scrape job for Prometheus's own metrics; later sections extend this file:

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitoring
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      scrape_timeout: 15s
    scrape_configs:
    - job_name: 'prometheus'
      static_configs:
      - targets: ['localhost:9090']

3.3. Create the ConfigMap

kubectl apply -f prometheus-config.yaml

3.4. Prepare the Prometheus deployment file

Prepare the Prometheus deployment file prometheus-deploy.yaml:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus
  namespace: monitoring
  labels:
    app: prometheus
spec:
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      serviceAccountName: prometheus
      containers:
      - image: prom/prometheus:v2.31.1
        name: prometheus
        securityContext:
          runAsUser: 0
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/prometheus" # TSDB data path
        - "--storage.tsdb.retention.time=24h"
        - "--web.enable-admin-api" # enables the admin HTTP API, which includes operations such as deleting time series
        - "--web.enable-lifecycle" # enables hot reload: a POST to localhost:9090/-/reload takes effect immediately
        ports:
        - containerPort: 9090
          name: http
        volumeMounts:
        - mountPath: "/etc/prometheus"
          name: config-volume
        - mountPath: "/prometheus"
          name: data
        resources:
          requests:
            cpu: 200m
            memory: 1024Mi
          limits:
            cpu: 200m
            memory: 1024Mi
      - image: jimmidyson/configmap-reload:v0.4.0 # reloads the Prometheus config when the ConfigMap changes
        name: prometheus-reload
        securityContext:
          runAsUser: 0
        args:
        - "--volume-dir=/etc/config"
        - "--webhook-url=http://localhost:9090/-/reload"
        volumeMounts:
        - mountPath: "/etc/config"
          name: config-volume
        resources:
          requests:
            cpu: 100m
            memory: 50Mi
          limits:
            cpu: 100m
            memory: 50Mi
      volumes:
      - name: data
        persistentVolumeClaim:
          claimName: prometheus-data
      - configMap:
          name: prometheus-config
        name: config-volume

3.5. Prepare the Prometheus storage file

Prepare the Prometheus storage file prometheus-storage.yaml:

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:

name: local-storage

provisioner: kubernetes.io/no-provisioner

volumeBindingMode: WaitForFirstConsumer

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: prometheus-local

labels:

app: prometheus

spec:

accessModes:

- ReadWriteOnce

capacity:

storage: 20Gi

storageClassName: local-storage

local:

path: /data/k8s/prometheus #確保該節點上存在此目錄

persistentVolumeReclaimPolicy: Retain

nodeAffinity:

required:

nodeSelectorTerms:

- matchExpressions:

- key: kubernetes.io/hostname

operator: In

values:

- k8s-worker-2

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: prometheus-data

namespace: monitoring

spec:

selector:

matchLabels:

app: prometheus

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 20Gi

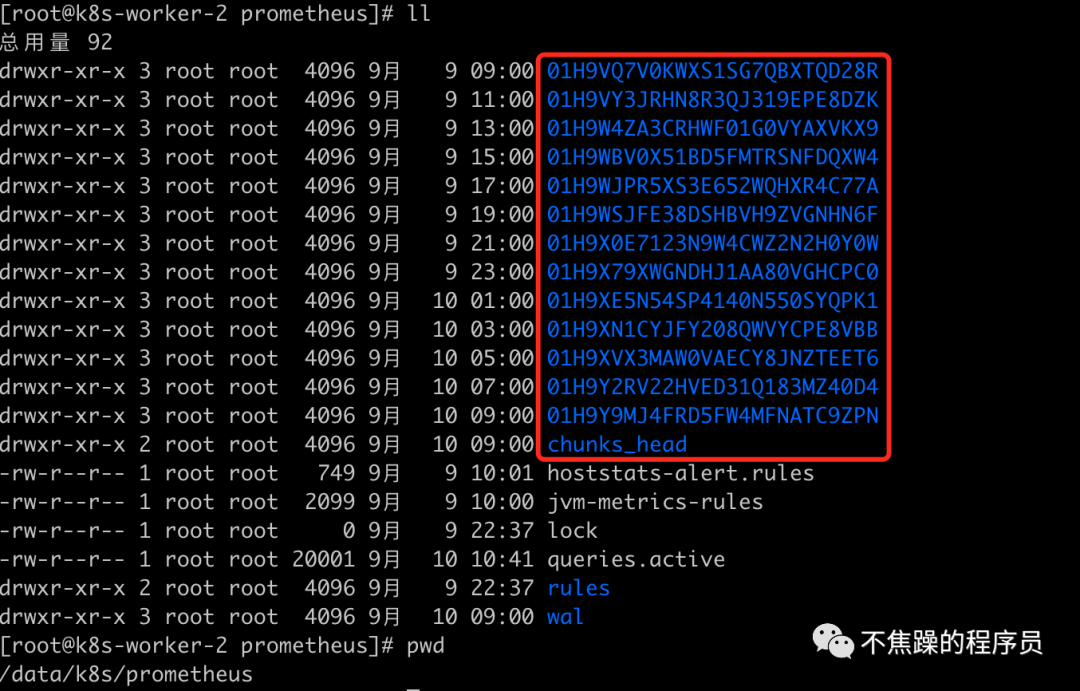

storageClassName: local-storage這里我使用的是k8s-worker-2節點作為存儲資源,讀者們使用時要改成自己的節點名稱,同時要在對應的節點下創建目錄:/data/k8s/prometheus。最終時序數據庫的數據會存儲到此目錄下,見下圖:

上面的yaml中用到了pv、pvc、storageclass存儲相關的知識,后面寫篇文章講解下,這里簡單介紹下:pv、pvc、storageclass主要是為pod自動創建存儲資源相關的組件。

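3.6. Create the storage resources

kubectl apply -f prometheus-storage.yaml

3.7. Prepare the service account, role, and permission file

Prepare the service account, role, and permission file prometheus-rbac.yaml: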

apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: monitoring
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups:
  - ""
  resources:
  - nodes
  - services
  - endpoints
  - pods
  - nodes/proxy
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - "extensions"
  resources:
  - ingresses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - configmaps
  - nodes/metrics
  verbs:
  - get
- nonResourceURLs:
  - /metrics
  verbs:
  - get
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: monitoring

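3.8. Create the RBAC resources

kubectl apply -f prometheus-rbac.yaml

3.9. Create the Deployment

kubectl apply -f prometheus-deploy.yaml

3.10. Prepare the Service file

Prepare the Service file prometheus-svc.yaml. It uses the NodePort type so that Prometheus can be reached from outside the cluster: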

apiVersion: v1

kind: Service

metadata:

name: prometheus

namespace: monitoring

labels:

app: prometheus

spec:

selector:

app: prometheus

type: NodePort

ports:

- name: web

port: 9090

targetPort: http3.11、創建service對象:

kubectl apply -f prometheus-svc.yaml3.12、訪問prometheus

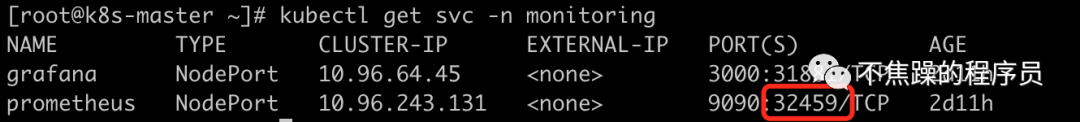

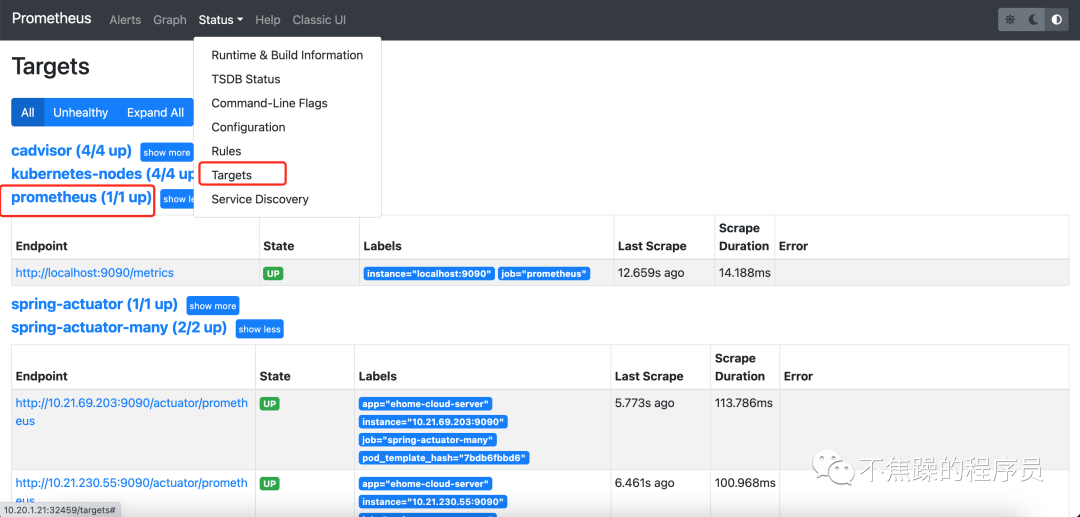

此時通過kubectl get svc -n monitoring獲取暴露的端口號,通過K8S集群的任意節點+端口號就可以訪問prometheus了。比如通過http://10.20.1.21:32459/訪問,可以看到如下界面,通過targets可以看到上面prometheus-config.yaml文件中配置的被抓取對象:
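As a small illustration of reading the port mapping, the sketch below extracts the NodePort from a value in the PORT(S) column that kubectl get svc prints, such as 9090:32459/TCP. The `node_port` helper is hypothetical and the sample value is taken from the URL in this article; your cluster will assign a different port.

```python
import re


def node_port(ports_column, service_port):
    """Return the NodePort mapped to service_port, or None if it is not listed.

    ports_column is the PORT(S) value of a NodePort Service, e.g. "9090:32459/TCP".
    """
    for entry in ports_column.split(","):
        match = re.fullmatch(r"(\d+):(\d+)/(TCP|UDP|SCTP)", entry.strip())
        if match and int(match.group(1)) == service_port:
            return int(match.group(2))
    return None


port = node_port("9090:32459/TCP", 9090)
url = f"http://10.20.1.21:{port}/"  # any node IP works for a NodePort Service
```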

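Prometheus is now installed. Next, install Grafana.

4. Installing Grafana

Prometheus's built-in charting is fairly limited, so Grafana is commonly used to visualize Prometheus data.

4.1. Prepare the Grafana deployment file

Prepare the Grafana deployment file grafana-deploy.yaml. It is an all-in-one file that defines the Deployment, Service, PV, and PVC together: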

apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana
  namespace: monitoring
spec:
  selector:
    matchLabels:
      app: grafana
  template:
    metadata:
      labels:
        app: grafana
    spec:
      volumes:
      - name: storage
        persistentVolumeClaim:
          claimName: grafana-data
      containers:
      - name: grafana
        image: grafana/grafana:8.3.3
        imagePullPolicy: IfNotPresent
        securityContext:
          runAsUser: 0
        ports:
        - containerPort: 3000
          name: grafana
        env:
        - name: GF_SECURITY_ADMIN_USER
          value: admin
        - name: GF_SECURITY_ADMIN_PASSWORD
          value: admin
        readinessProbe:
          failureThreshold: 10
          httpGet:
            path: /api/health
            port: 3000
            scheme: HTTP
          initialDelaySeconds: 60
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 30
        livenessProbe:
          failureThreshold: 3
          httpGet:
            path: /api/health
            port: 3000
            scheme: HTTP
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 1
        resources:
          limits:
            cpu: 400m
            memory: 1024Mi
          requests:
            cpu: 200m
            memory: 512Mi
        volumeMounts:
        - mountPath: /var/lib/grafana
          name: storage
---
apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: monitoring
spec:
  type: NodePort
  ports:
  - port: 3000
  selector:
    app: grafana
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: grafana-local
  labels:
    app: grafana
spec:
  accessModes:
  - ReadWriteOnce
  capacity:
    storage: 1Gi
  storageClassName: local-storage
  local:
    path: /data/k8s/grafana # make sure this directory exists on the node
  persistentVolumeReclaimPolicy: Retain
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          - k8s-worker-2
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: grafana-data
  namespace: monitoring
spec:
  selector:
    matchLabels:
      app: grafana
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
  storageClassName: local-storage

This again uses PV, PVC, and StorageClass. Node affinity selects the k8s-worker-2 node, and the directory /data/k8s/grafana must be created on that node.

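4.2. Deploy the Grafana resources

kubectl apply -f grafana-deploy.yaml

4.3. Access Grafana

Look up the Service's port mapping (for example with kubectl get svc -n monitoring):

Open Grafana at http://10.20.1.21:31881/, log in with the username and password set in the deployment file, then add Prometheus as a data source:

5. Configuring metric scraping

5.1. Scraping node data

Before scraping node data, deploy node-exporter on the nodes so that Prometheus can scrape the metrics it exposes.

5.1.1. Prepare the node-exporter deployment file

Prepare the node-exporter deployment file node-exporter-daemonset.yaml: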

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-exporter
  namespace: kube-system
  labels:
    app: node-exporter
spec:
  selector:
    matchLabels:
      app: node-exporter
  template:
    metadata:
      labels:
        app: node-exporter
    spec:
      hostPID: true
      hostIPC: true
      hostNetwork: true
      nodeSelector:
        kubernetes.io/os: linux
      containers:
      - name: node-exporter
        image: prom/node-exporter:v1.3.1
        args:
        - --web.listen-address=$(HOSTIP):9100
        - --path.procfs=/host/proc
        - --path.sysfs=/host/sys
        - --path.rootfs=/host/root
        - --no-collector.hwmon # disable collectors that are not needed
        - --no-collector.nfs
        - --no-collector.nfsd
        - --no-collector.nvme
        - --no-collector.dmi
        - --no-collector.arp
        - --collector.filesystem.ignored-mount-points=^/(dev|proc|sys|var/lib/containerd/.+|/var/lib/docker/.+|var/lib/kubelet/pods/.+)($|/)
        - --collector.filesystem.ignored-fs-types=^(autofs|binfmt_misc|cgroup|configfs|debugfs|devpts|devtmpfs|fusectl|hugetlbfs|mqueue|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|sysfs|tracefs)$
        ports:
        - containerPort: 9100
        env:
        - name: HOSTIP
          valueFrom:
            fieldRef:
              fieldPath: status.hostIP
        resources:
          requests:
            cpu: 150m
            memory: 200Mi
          limits:
            cpu: 300m
            memory: 400Mi
        securityContext:
          runAsNonRoot: true
          runAsUser: 65534
        volumeMounts:
        - name: proc
          mountPath: /host/proc
        - name: sys
          mountPath: /host/sys
        - name: root
          mountPath: /host/root
          mountPropagation: HostToContainer
          readOnly: true
      tolerations: # tolerate all taints so the pod is scheduled on every node
      - operator: "Exists"
      volumes:
      - name: proc
        hostPath:
          path: /proc
      - name: dev
        hostPath:
          path: /dev
      - name: sys
        hostPath:
          path: /sys
      - name: root
        hostPath:
          path: /

5.1.2. Deploy node-exporter

kubectl apply -f node-exporter-daemonset.yaml

5.1.3. Have Prometheus scrape the data

Add another job under scrape_configs in the prometheus-config.yaml file from earlier:

- job_name: kubernetes-nodes

kubernetes_sd_configs:

- role: node

relabel_configs:

- source_labels: [__address__]

regex: '(.*):10250'

replacement: '${1}:9100'

target_label: __address__

action: replace

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)完整的prometheus-config.yaml見文末。

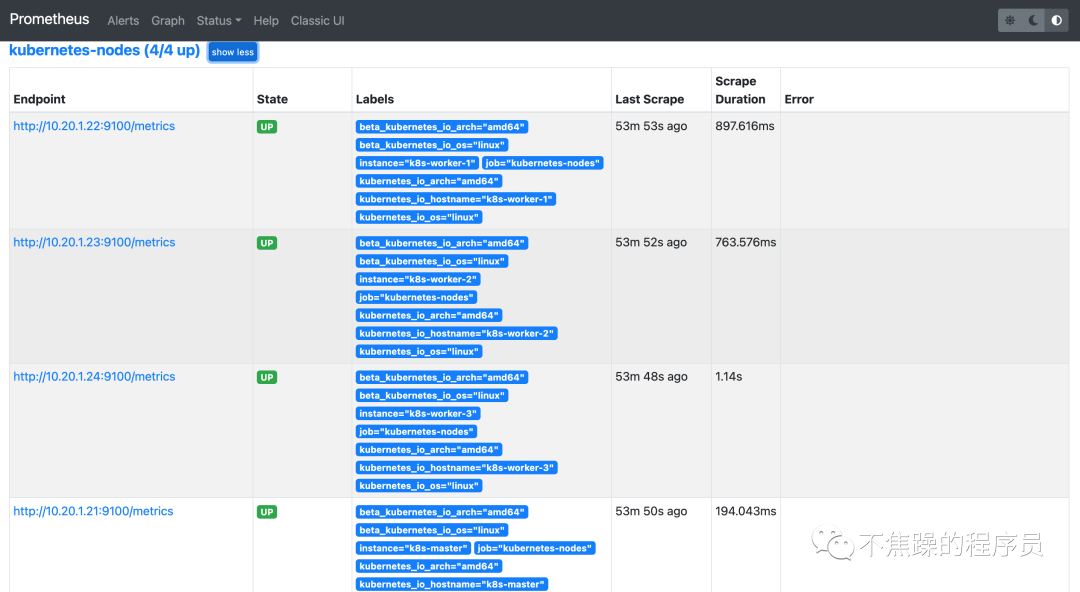

prometheus-config.yaml文件修改完,稍等一會兒就可以看到頁面多了幾個target,如下圖所示,這些都是被prometheus監控的對象:
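The replace rule in this job rewrites each node's discovered address from the kubelet port 10250 to the node-exporter port 9100. The Python sketch below imitates that one relabel step under simplifying assumptions: a single source label, and Python's `\g<1>` group syntax standing in for Prometheus's `${1}`. The `relabel_replace` helper is hypothetical, not a Prometheus API.

```python
import re


def relabel_replace(labels, source_label, regex, replacement, target_label):
    """Imitate a Prometheus relabel rule with action: replace.

    Prometheus anchors the regex at both ends, hence re.fullmatch. When the
    regex does not match, the label set is returned unchanged.
    """
    match = re.fullmatch(regex, labels.get(source_label, ""))
    if match is None:
        return dict(labels)
    updated = dict(labels)
    updated[target_label] = match.expand(replacement)
    return updated


discovered = {"__address__": "10.20.1.22:10250"}
rewritten = relabel_replace(
    discovered,
    source_label="__address__",
    regex=r"(.*):10250",
    replacement=r"\g<1>:9100",  # Python spelling of '${1}:9100'
    target_label="__address__",
)
```

With the node addresses from the prerequisites, this is why the new targets show up on port 9100 even though node discovery reports port 10250.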

5.2、配置抓取springboot actuator數據

5.2.1、配置springboot應用

- springboot應用增加pom

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-actuator</artifactId>

</dependency>

<dependency>

<groupId>io.micrometer</groupId>

<artifactId>micrometer-registry-prometheus</artifactId>

</dependency>- springboot應用配置properties文件:

management.endpoint.health.probes.enabled=true

management.health.probes.enabled=true

management.endpoint.health.enabled=true

management.endpoint.health.show-details=always

management.endpoints.web.exposure.include=*

management.endpoints.web.exposure.exclude=env,beans

management.endpoint.shutdown.enabled=true

management.server.port=9090- 查看指標鏈接

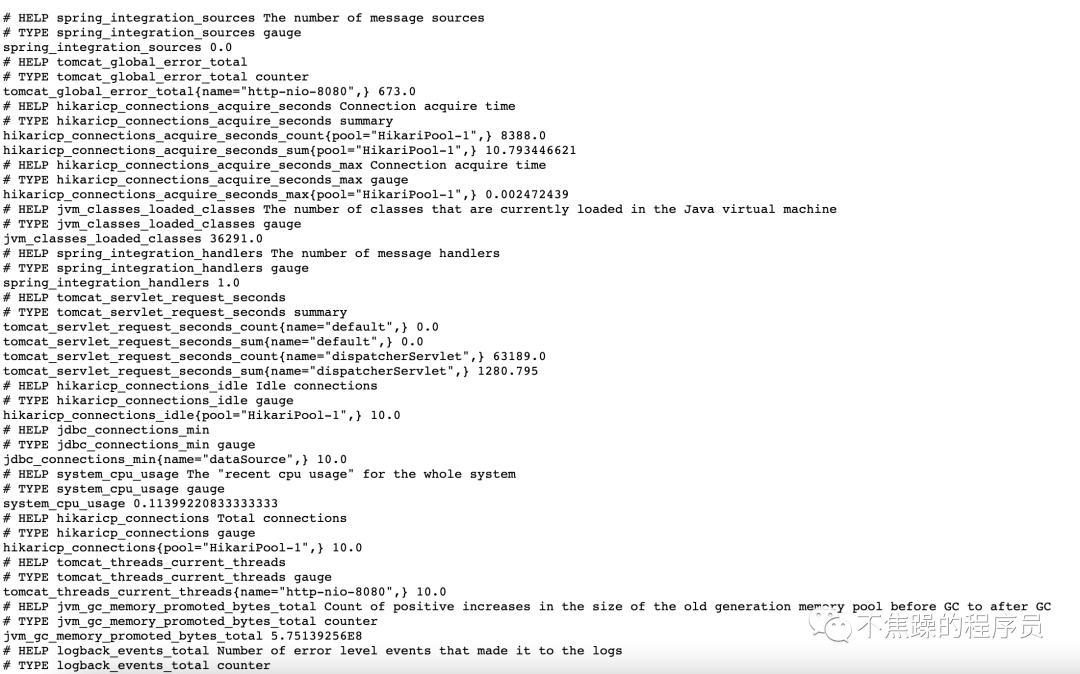

配置完之后,重新打鏡像部署到K8S集群,這里不做演示了。訪問應用的/actuator/prometheus鏈接得到如下結果,將系統的指標信息暴露出來:
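The /actuator/prometheus response is plain text in the Prometheus exposition format: comment lines starting with #, then one sample per line. The sketch below is a simplified parser for illustration only. It ignores optional timestamps and other corner cases of the format, and the sample payload is made up rather than copied from a real application.

```python
import re

# name, optional {labels}, then the value; lines with a trailing timestamp are skipped.
SAMPLE_LINE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(.*)\})?\s+(\S+)$')
LABEL_PAIR = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="((?:[^"\\]|\\.)*)"')


def parse_metrics(text):
    """Return a list of (metric_name, labels_dict, value) tuples."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = SAMPLE_LINE.match(line)
        if match is None:
            continue  # outside the simplified grammar handled here
        name, raw_labels, value = match.groups()
        labels = dict(LABEL_PAIR.findall(raw_labels or ""))
        samples.append((name, labels, float(value)))
    return samples


# Illustrative payload; a real application returns many more series.
payload = """\
# HELP jvm_threads_live_threads The current number of live threads
# TYPE jvm_threads_live_threads gauge
jvm_threads_live_threads 25.0
jvm_memory_used_bytes{area="heap",id="PS Eden Space"} 1048576.0
"""
samples = parse_metrics(payload)
```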

5.2.2、prometheus接入抓取數據

繼續修改配置文件prometheus-config.yaml,如下:

- job_name: 'spring-actuator-many'

metrics_path: '/actuator/prometheus'

scrape_interval: 5s

kubernetes_sd_configs:

- role: pod

relabel_configs:

- source_labels: [__meta_kubernetes_namespace]

separator: ;

regex: 'test1'

target_label: namespace

action: keep

- source_labels: [__address__]

regex: '(.*):9090'

target_label: __address__

action: keep

- action: labelmap

regex: __meta_kubernetes_pod_label_(.+)配置文件中的大概意思是,選擇“端口是9090,namespace是test1”的pod資源進行監控。更多的語法,讀者自行查閱prometheus官網。

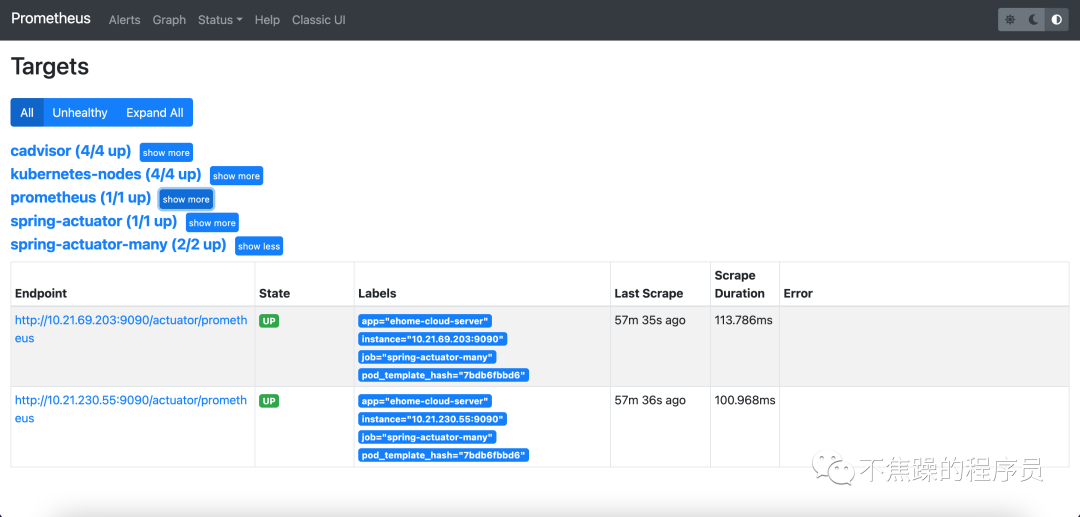

稍等片刻,可以看到多了springboot應用的監控目標:
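The two keep rules act as a filter: a discovered pod stays a target only if every keep rule matches. Here is a minimal Python sketch of that filtering, using made-up pod addresses and a hypothetical `keep` helper:

```python
import re


def keep(labels, source_label, regex):
    """Imitate action: keep. The target survives only on a full regex match."""
    return re.fullmatch(regex, labels.get(source_label, "")) is not None


# Hypothetical discovered pods; the IPs and namespaces are illustrative.
pods = [
    {"__meta_kubernetes_namespace": "test1", "__address__": "10.244.1.5:9090"},
    {"__meta_kubernetes_namespace": "test1", "__address__": "10.244.1.5:8080"},
    {"__meta_kubernetes_namespace": "default", "__address__": "10.244.2.7:9090"},
]

# The same two conditions as the relabel_configs above.
rules = [
    ("__meta_kubernetes_namespace", r"test1"),
    ("__address__", r"(.*):9090"),
]

targets = [pod for pod in pods if all(keep(pod, src, rx) for src, rx in rules)]
```

Only the first pod survives: the second exposes the wrong port and the third lives in another namespace.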

6、配置監控圖表

指標數據都有了,接下來就是如何配置圖表了。grafana提供了豐富的圖表,可以在官網上自行選擇。下文繼續配置監控node的圖表 和 監控springboot應用的圖表。

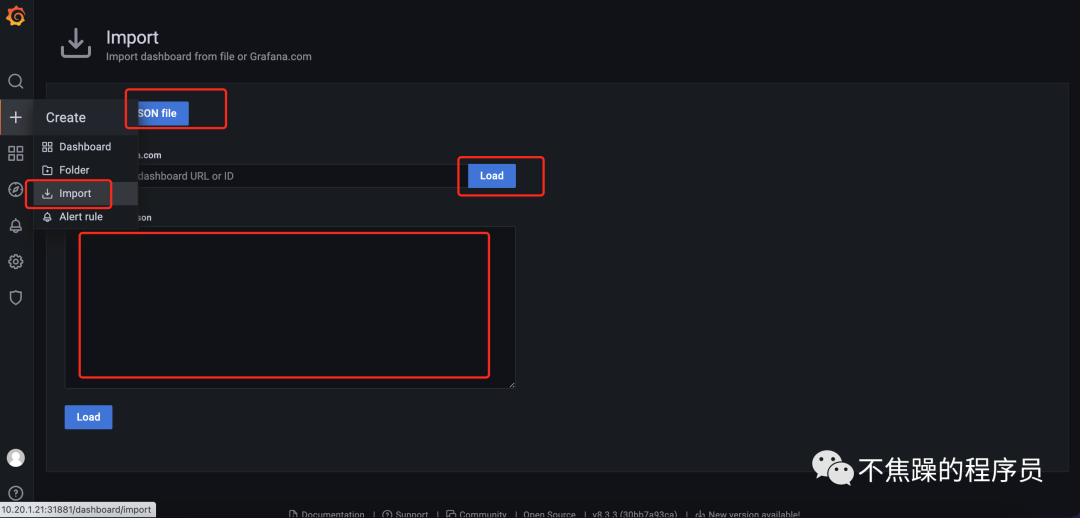

配置圖表有3種方式:json文件、輸入圖表id、輸入json內容。配置界面如下圖:

6.1、配置node監控圖表

在上圖的界面中選擇輸入圖表id的方式,輸入圖表id8919,即可看到如下界面:

6.2、配置springboot應用的圖表

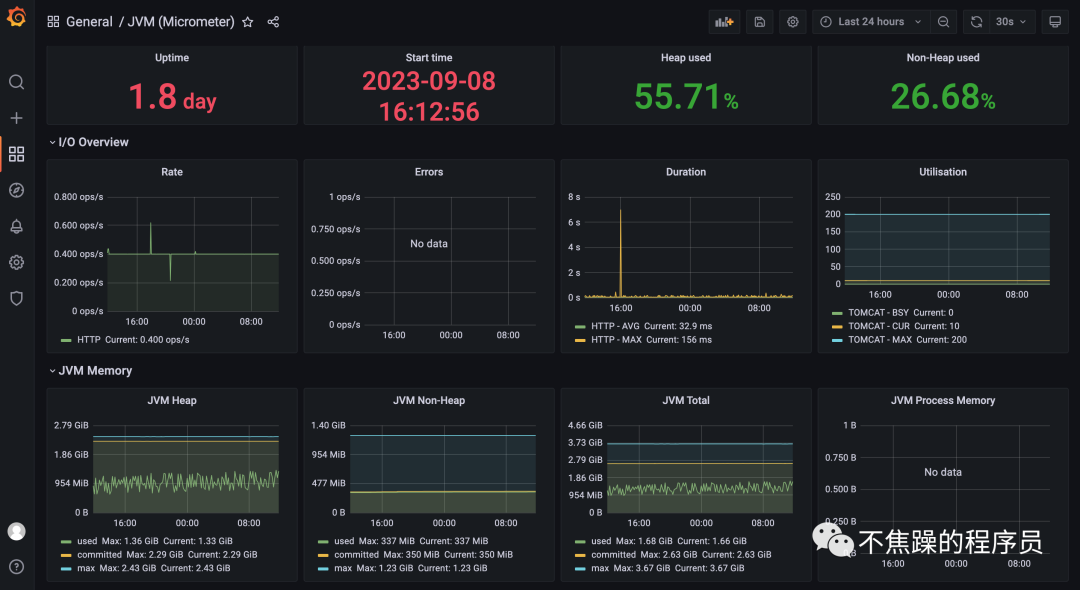

在上圖的界面中選擇輸入json內容的方式,輸入此鏈接下的json內容https://img.mangod.top/blog/13-6-jvm-micrometer.json,即可看到如下圖表:

至此k8s-node監控和springboot應用監控已經完成。如果還需要更多的監控,讀者需要自行查閱資料。

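7. Installing Alertmanager

With monitoring in place, the next step is the alerting component, Alertmanager. It can be installed with Docker on any node of the K8S cluster.

7.1. Install Alertmanager

7.1.1. Pull the Docker image

docker pull prom/alertmanager:v0.25.0

7.1.2. Create the alerting configuration file

Before creating alertmanager.yml, create the directory /data/prometheus/alertmanager on the node where Alertmanager will run. Then create alertmanager.yml in that directory with the following content: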

route:

group_by: ['alertname']

group_wait: 30s

group_interval: 5m

repeat_interval: 1h

receiver: 'mail_163'

global:

smtp_smarthost: 'smtp.qq.com:465'

smtp_from: '294931067@qq.com'

smtp_auth_username: '294931067@qq.com'

# 此處是發送郵件的授權碼,不是密碼

smtp_auth_password: '此處是授權碼,比如sdfasdfsdffsfa'

smtp_require_tls: false

receivers:

- name: 'mail_163'

email_configs:

- to: 'yclxiao@163.com'

send_resolved: true

inhibit_rules:

- source_match:

severity: 'critical'

target_match:

severity: 'warning'

equal: ['alertname', 'dev', 'instance']7.1.3、安裝啟動:

docker run --name alertmanager -d -p 9093:9093 -v

/data/prometheus/alertmanager:/etc/alertmanager prom/alertmanager:v0.25.07.1.4、訪問alertmanager

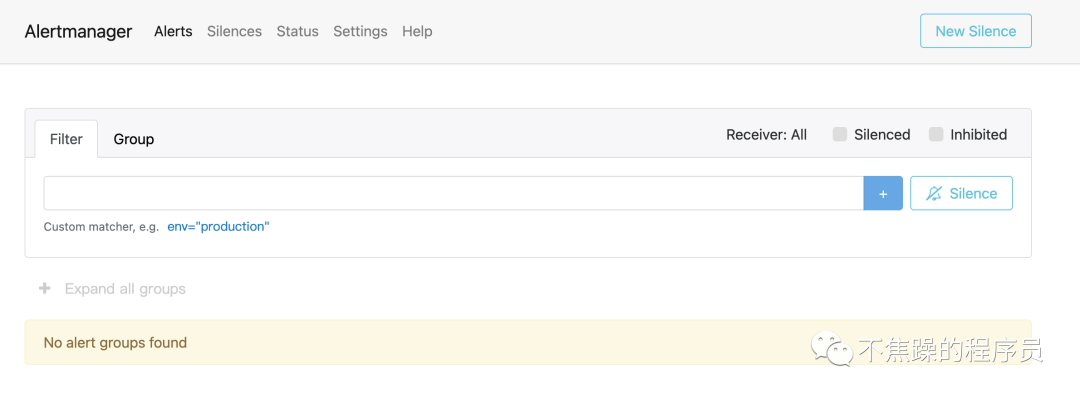

安裝完畢之后,通過如下鏈接訪問:http://10.20.1.21:9093/#/alerts,界面如下:

7.2、與prometheus關聯

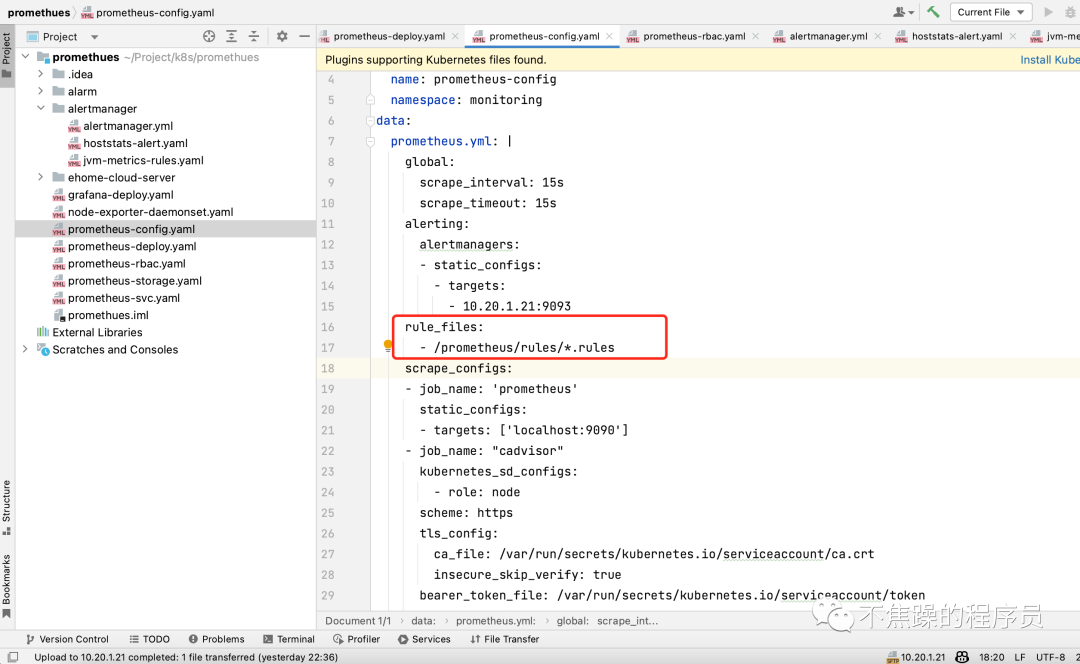

在prometheus-configmap.yaml文件中增加如下配置,即可讓prometheus與alertmanager關聯起來,配置中的地址改成自己的prometheus地址。

7.3、配置觸發告警規則

7.3.1、增加配置目錄

在prometheus-configmap.yaml文件中增加如下配置,即可增加觸發告警的規則:

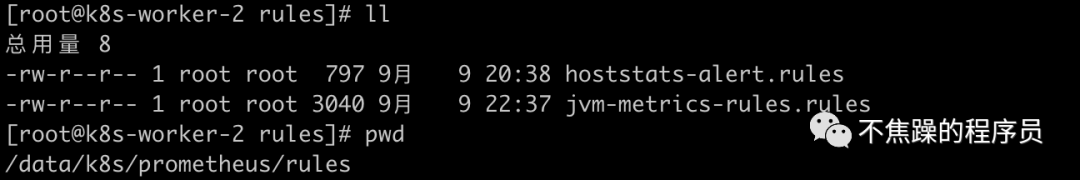

注意此處的文件目錄/prometheus/是prometheus所在存儲目錄,我這里是安裝在k8s-worker-2下,然后在prometheus的存儲目錄下建立/rules文件夾,如下圖:

至此prometheus-config.yaml全部配置完畢,最后附上完整的prometheus-config.yaml:

apiVersion: v1

kind: ConfigMap

metadata:

name: prometheus-config

namespace: monitoring

data:

prometheus.yml: |

global:

scrape_interval: 15s

scrape_timeout: 15s

alerting:

alertmanagers:

- static_configs:

- targets:

- 10.20.1.21:9093

rule_files:

- /prometheus/rules/*.rules

scrape_configs:

- job_name: 'prometheus'

static_configs:

- targets: ['localhost:9090']

- job_name: "cadvisor"

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

insecure_skip_verify: true

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

replacement: $1

- replacement: /metrics/cadvisor # <nodeip>/metrics -> <nodeip>/metrics/cadvisor

target_label: __metrics_path__

- job_name: kubernetes-nodes

kubernetes_sd_configs:

- role: node

relabel_configs:

- source_labels: [__address__]

regex: '(.*):10250'

replacement: '${1}:9100'

target_label: __address__

action: replace

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- job_name: 'spring-actuator-many'

metrics_path: '/actuator/prometheus'

scrape_interval: 5s

kubernetes_sd_configs:

- role: pod

relabel_configs:

- source_labels: [__meta_kubernetes_namespace]

separator: ;

regex: 'test1'

target_label: namespace

action: keep

- source_labels: [__address__]

regex: '(.*):9090'

target_label: __address__

action: keep

- action: labelmap

regex: __meta_kubernetes_pod_label_(.+)7.3.2、配置觸發告警規則

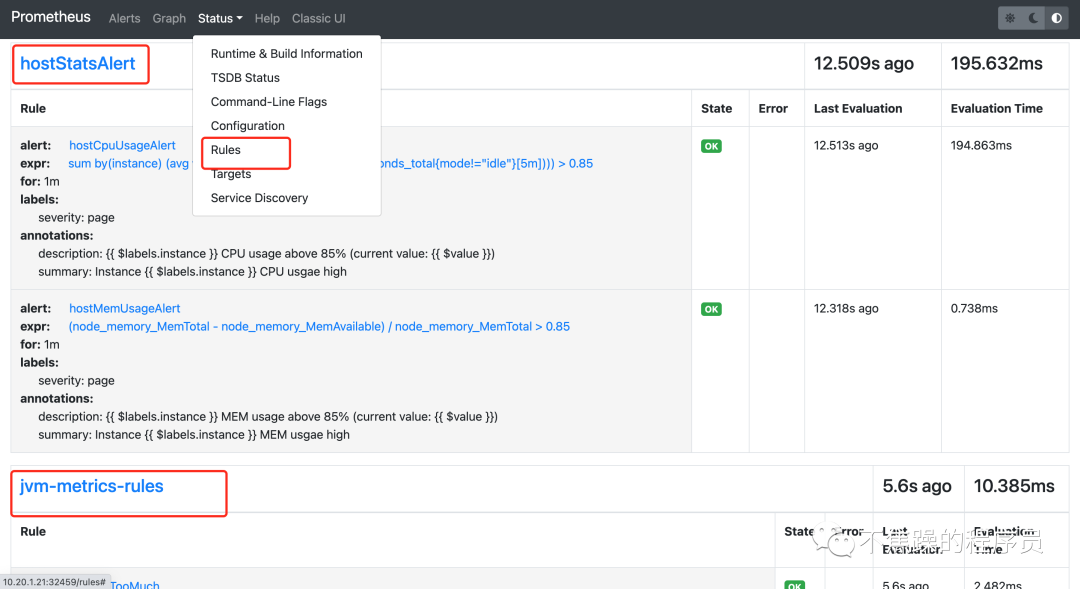

觸發告警規則的目錄已經定好了,接下來就是寫具體規則了,在目錄下創建2個觸發告警的規則文件,如上圖,文件中寫了觸發node節點告警規則和觸發springboot應用的告警規則,具體內容如下:

- Node alerting rules, hoststats-alert.yaml:

groups:
- name: hostStatsAlert
  rules:
  - alert: hostCpuUsageAlert
    expr: sum(avg without (cpu)(irate(node_cpu_seconds_total{mode!='idle'}[5m]))) by (instance) > 0.85
    for: 1m
    labels:
      severity: page
    annotations:
      summary: "Instance {{ $labels.instance }} CPU usage high"
      description: "{{ $labels.instance }} CPU usage above 85% (current value: {{ $value }})"
  - alert: hostMemUsageAlert
    expr: (node_memory_MemTotal_bytes - node_memory_MemAvailable_bytes)/node_memory_MemTotal_bytes > 0.85
    for: 1m
    labels:
      severity: page
    annotations:
      summary: "Instance {{ $labels.instance }} MEM usage high"
      description: "{{ $labels.instance }} MEM usage above 85% (current value: {{ $value }})"

- Spring Boot application alerting rules, jvm-metrics-rules.yaml:

groups:

- name: jvm-metrics-rules

rules:

# 在5分鐘里,GC花費時間超過10%

- alert: GcTimeTooMuch

expr: increase(jvm_gc_collection_seconds_sum[5m]) > 30

for: 5m

labels:

severity: red

annotations:

summary: "{{ $labels.app }} GC時間占比超過10%"

message: "ns:{{ $labels.namespace }} pod:{{ $labels.pod }} GC時間占比超過10%,當前值({{ $value }}%)"

# GC次數太多

- alert: GcCountTooMuch

expr: increase(jvm_gc_collection_seconds_count[1m]) > 30

for: 1m

labels:

severity: red

annotations:

summary: "{{ $labels.app }} 1分鐘GC次數>30次"

message: "ns:{{ $labels.namespace }} pod:{{ $labels.pod }} 1分鐘GC次數>30次,當前值({{ $value }})"

# FGC次數太多

- alert: FgcCountTooMuch

expr: increase(jvm_gc_collection_seconds_count{gc="ConcurrentMarkSweep"}[1h]) > 3

for: 1m

labels:

severity: red

annotations:

summary: "{{ $labels.app }} 1小時的FGC次數>3次"

message: "ns:{{ $labels.namespace }} pod:{{ $labels.pod }} 1小時的FGC次數>3次,當前值({{ $value }})"

# 非堆內存使用超過80%

- alert: NonheapUsageTooMuch

expr: jvm_memory_bytes_used{job="spring-actuator-many", area="nonheap"} / jvm_memory_bytes_max * 100 > 80

for: 1m

labels:

severity: red

annotations:

summary: "{{ $labels.app }} 非堆內存使用>80%"

message: "ns:{{ $labels.namespace }} pod:{{ $labels.pod }} 非堆內存使用率>80%,當前值({{ $value }}%)"

# 內存使用預警

- alert: HeighMemUsage

expr: process_resident_memory_bytes{job="spring-actuator-many"} / os_total_physical_memory_bytes * 100 > 15

for: 1m

labels:

severity: red

annotations:

summary: "{{ $labels.app }} rss內存使用率大于85%"

message: "ns:{{ $labels.namespace }} pod:{{ $labels.pod }} rss內存使用率大于85%,當前值({{ $value }}%)"

# JVM高內存使用預警

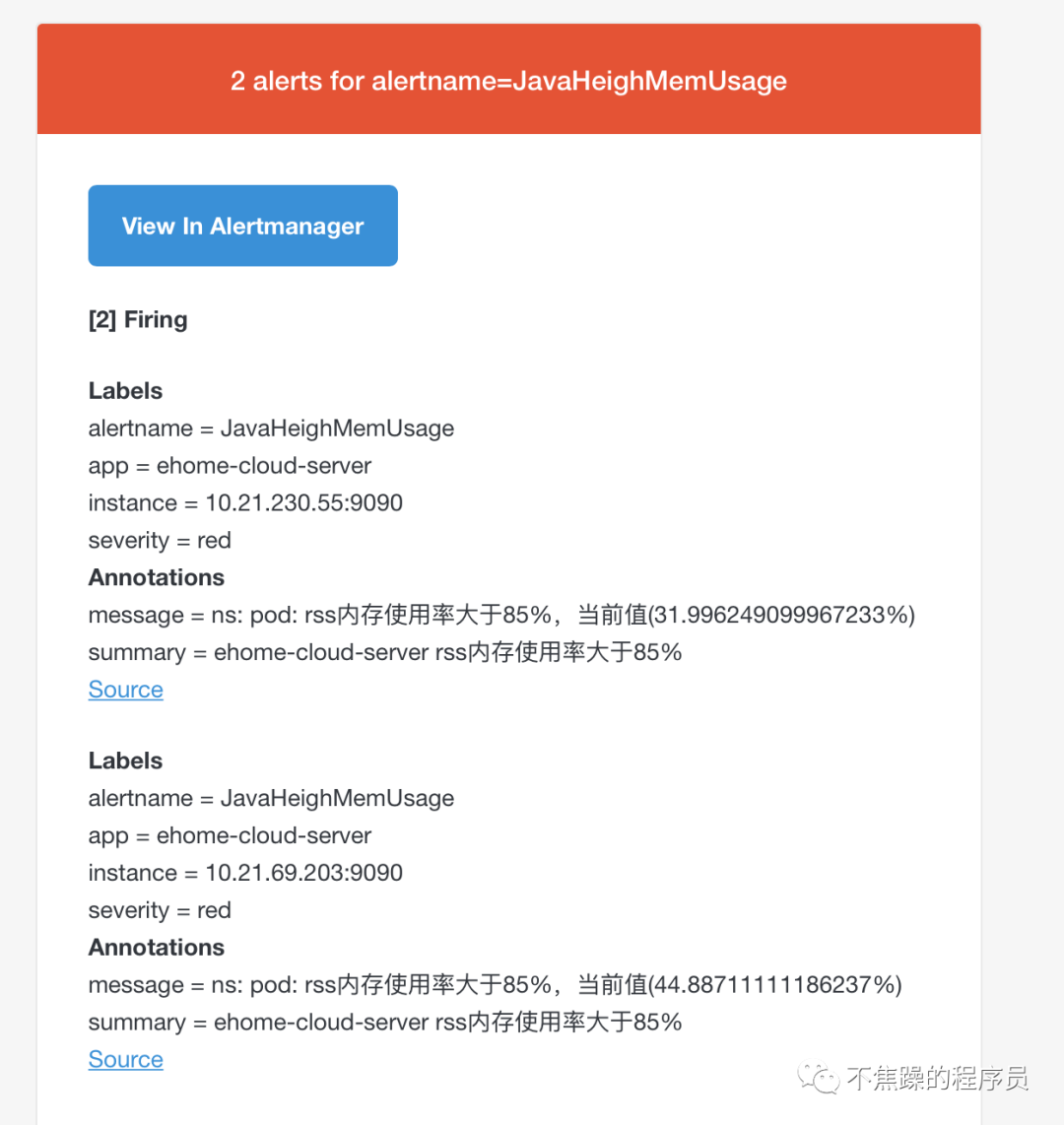

- alert: JavaHeighMemUsage

expr: sum(jvm_memory_used_bytes{area="heap",job="spring-actuator-many"}) by(app,instance) / sum(jvm_memory_max_bytes{area="heap",job="spring-actuator-many"}) by(app,instance) * 100 > 85

for: 1m

labels:

severity: red

annotations:

summary: "{{ $labels.app }} rss內存使用率大于85%"

message: "ns:{{ $labels.namespace }} pod:{{ $labels.pod }} rss內存使用率大于85%,當前值({{ $value }}%)"

# CPU使用預警

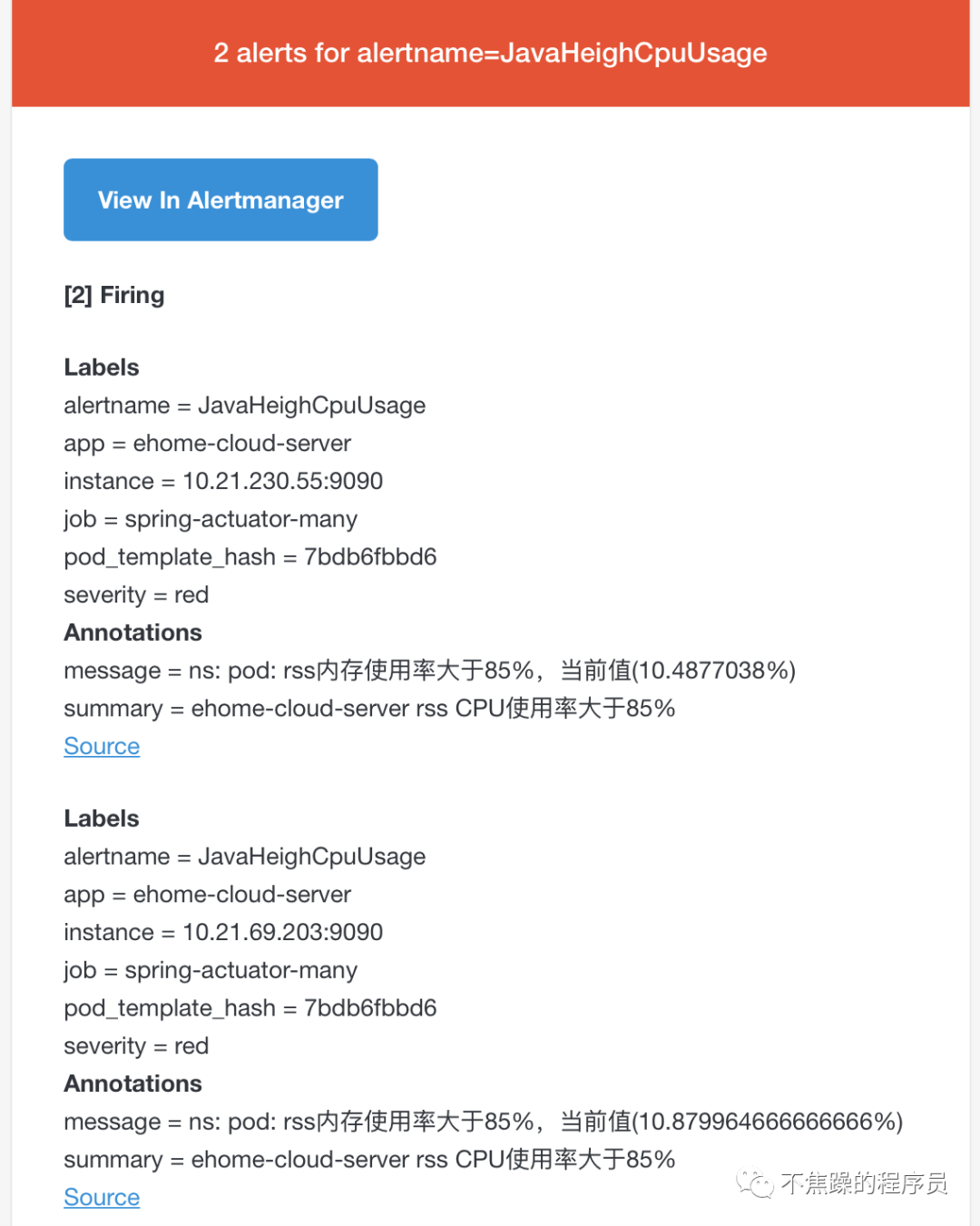

- alert: JavaHeighCpuUsage

expr: system_cpu_usage{job="spring-actuator-many"} * 100 > 85

for: 1m

labels:

severity: red

annotations:

summary: "{{ $labels.app }} rss CPU使用率大于85%"

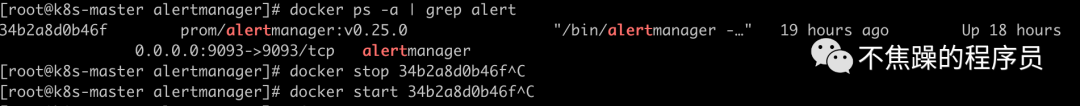

message: "ns:{{ $labels.namespace }} pod:{{ $labels.pod }} rss內存使用率大于85%,當前值({{ $value }}%)"- 告警文件準備好之后,先重啟alertmanager,再重啟prometheus:

kubectl delete -f prometheus-deploy.yamlkubectl apply -f

prometheus-deploy.yaml- 查看界面

此時查看alertmanager的status,可以看到如下界面:

此時查看promethetus的rules,可以看到如下界面:
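Each rule above pairs an expr with a for duration: the alert only fires once the expression has stayed true for that long, and it is pending until then. The Python sketch below simulates that behaviour on made-up CPU samples. The `alert_states` helper is hypothetical and simplifies real rule evaluation, which runs per label set at a fixed evaluation interval.

```python
def alert_states(samples, threshold, for_seconds):
    """Return one state per sample: "inactive", "pending", or "firing".

    samples is a list of (timestamp_seconds, value) in evaluation order.
    """
    states = []
    active_since = None  # when the condition most recently became true
    for ts, value in samples:
        if value > threshold:
            if active_since is None:
                active_since = ts
            held_long_enough = ts - active_since >= for_seconds
            states.append("firing" if held_long_enough else "pending")
        else:
            active_since = None  # the condition broke, so the timer resets
            states.append("inactive")
    return states


# Illustrative CPU readings sampled every 15 seconds.
cpu = [(0, 0.50), (15, 0.90), (30, 0.92), (45, 0.91), (60, 0.95), (75, 0.97), (90, 0.40)]
states = alert_states(cpu, threshold=0.85, for_seconds=60)
```

In this run the condition becomes true at 15s and the alert fires at 75s, after holding for the full minute. A short spike that drops back below the threshold never leaves the pending state, which is the point of the for clause.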

7.3.3、注意點

改了alertmanager的告警配置要重啟alertmanager才生效。

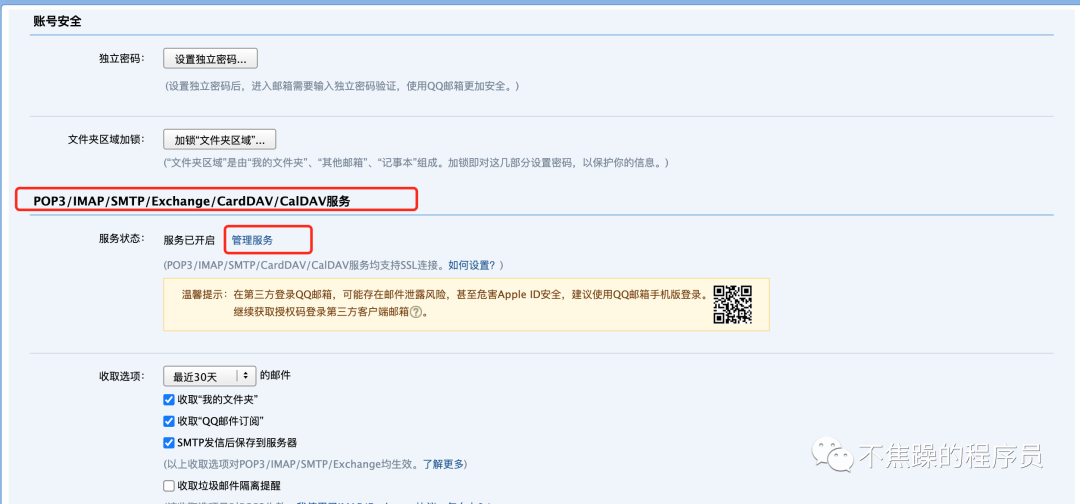

alertmanager.yml中的smtp_auth_password配置的是郵件發送的授權碼,不是郵箱密碼。郵箱的授權碼的配置如下圖,下圖以QQ郵箱為例:

至此基于Prometheus和Grafana的監控和告警已經安裝完畢。

8、測試告警

安裝完畢后,簡單測試下告警效果。有2種方式測試。

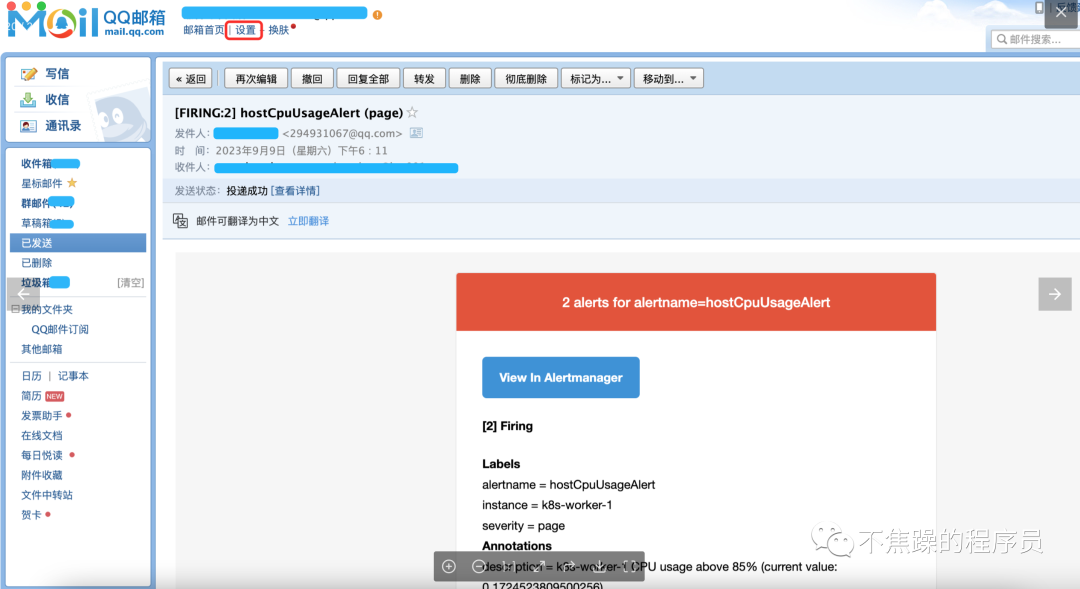

方式1:將告警規則值調低,會收到如下郵件:

方式2:通過命令cat /dev/zero>/dev/null拉高node節點的cpu或者拉高容器的cpu,,會收到如下郵件:

9、總結

本文主要講解基于Prometheus + Grafana的云原生應用監控和告警的實戰,助你快速搭建系統,希望對你有幫助!