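A Deep Dive into GPU Memory Allocation: A Practical Guide with Experiments for Machine Learning Engineers

Given a model architecture, data type, input shape, and optimizer, can you work out how much GPU memory the forward and backward passes need? To answer that, we have to break the process into its basic components and understand the memory requirements from the bottom up. The experiments below (which can be run on Google Colab) walk through the core concepts.

Reserved vs. allocated

PyTorch reserves more memory than it needs but allocates only what is required. It does this so that later requests can be served quickly from the reserved pool instead of going through an expensive reservation each time. Throughout this guide we care about allocated memory, not reserved memory.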

import torch
import torch.nn as nn
import matplotlib.pyplot as plt
from tqdm import tqdm

# Assumed setup (not shown in the original): run on the first CUDA device.
device_id = 0
device = torch.device(f"cuda:{device_id}")

def test_reservation_vs_allocation():
    print(f"Base memory reserved: {torch.cuda.memory_reserved(device_id)}")
    print(f"Base memory allocated: {torch.cuda.memory_allocated(device_id)}")

    # Allocate some memory
    x = torch.randn((1024,), dtype=torch.float32, device=device)
    print(f"Memory after allocation (reserved): {torch.cuda.memory_reserved(device_id)}")
    print(f"Memory after allocation (allocated): {torch.cuda.memory_allocated(device_id)}")

    # Cleanup
    del x
    print(f"Memory after cleanup (reserved): {torch.cuda.memory_reserved(device_id)}")
    print(f"Memory after cleanup (allocated): {torch.cuda.memory_allocated(device_id)}")

    torch.cuda.empty_cache()
    print(f"Memory after empty_cache (reserved): {torch.cuda.memory_reserved(device_id)}")
    print(f"Memory after empty_cache (allocated): {torch.cuda.memory_allocated(device_id)}")

"""
Output:
Base memory reserved: 0
Base memory allocated: 0
Memory after allocation (reserved): 2097152
Memory after allocation (allocated): 4096
Memory after cleanup (reserved): 2097152
Memory after cleanup (allocated): 0
Memory after empty_cache (reserved): 0
Memory after empty_cache (allocated): 0
"""

When x is deleted or goes out of scope, its memory is freed (the allocated count drops to 0) but stays reserved for future use. The reserved memory is only released when torch.cuda.empty_cache() is called.

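Here torch.cuda.memory_allocated() returns the memory that PyTorch has allocated in the current process. Memory used by other processes is not counted: if only another process is using the GPU, it still returns 0. To get the actual GPU memory usage, you can use the function below.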

import subprocess

def get_gpu_memory_used(gpu_id):
    """
    Returns the amount of memory used on the specified GPU in bytes.

    Parameters:
        gpu_id (int): The ID of the GPU (e.g., 0 for "cuda:0", 1 for "cuda:1").

    Returns:
        int: The amount of memory used on the GPU in bytes.
    """
    try:
        # Run the nvidia-smi command to get memory usage
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=memory.used", "--format=csv,nounits,noheader", f"--id={gpu_id}"],
            stdout=subprocess.PIPE,
            text=True
        )
        # Get the used memory in MiB from the result
        used_memory_mib = int(result.stdout.strip())
        # Convert MiB to bytes (1 MiB = 1024 * 1024 bytes)
        used_memory_bytes = used_memory_mib * 1024 * 1024
        return used_memory_bytes
    except Exception as e:
        print(f"Error occurred: {e}")
        return None

Data types

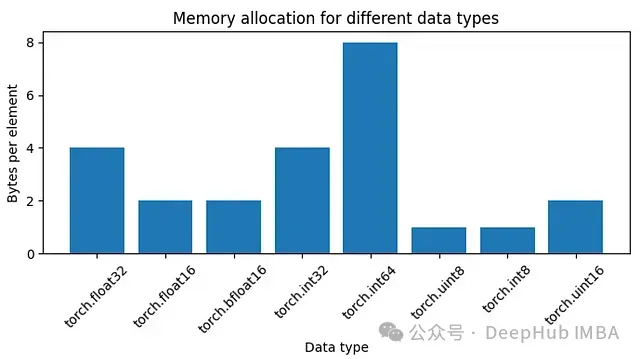

float32 takes 4 bytes per element and bfloat16 takes 2. We can plot the memory needed by a range of data types.

Figure 1: Memory allocation for different data types
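As a rough cross-check of the figure, the raw size of a tensor is simply the element count times the bytes per element. The sketch below hard-codes the standard element sizes in a table called `ITEMSIZE` and uses a helper called `tensor_bytes`; both names are illustrative and not part of PyTorch, and the result ignores any rounding done by the allocator.

```python
# Bytes per element for a few dtypes (standard sizes; the table name is illustrative).
ITEMSIZE = {
    "float32": 4, "float16": 2, "bfloat16": 2,
    "int64": 8, "int32": 4, "int8": 1, "uint8": 1,
}

def tensor_bytes(num_elements: int, dtype: str) -> int:
    """Raw payload size of a tensor, before any allocator rounding."""
    return num_elements * ITEMSIZE[dtype]

for name in ITEMSIZE:
    print(f"{name:>8}: {tensor_bytes(1024, name)} bytes for 1024 elements")
```

For 1024 float32 elements this gives 4096 bytes, which matches the allocated figure printed by the reservation experiment earlier.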

def test_dtype_memory_allocation():
    # torch.uint16 only exists in recent PyTorch releases; drop it if your version lacks it.
    dtypes = [torch.float32, torch.float16, torch.bfloat16, torch.int32, torch.int64, torch.uint8, torch.int8, torch.uint16]
    memories = []
    for dtype in dtypes:
        # Use PyTorch's own counter here: nvidia-smi reports whole MiB,
        # which is far too coarse for a 1024-element tensor.
        base_memory = torch.cuda.memory_allocated(device_id)
        x = torch.ones((1024,), dtype=dtype, device=device)
        memory_after_allocation = torch.cuda.memory_allocated(device_id)
        # 1024 elements, so bytes // 1024 gives bytes per element
        memories.append((memory_after_allocation - base_memory) // 1024)
        del x
        torch.cuda.empty_cache()

    fig = plt.figure(figsize=(7, 4))
    plt.bar([str(d) for d in dtypes], memories)
    plt.xlabel("Data type")
    plt.ylabel("Bytes per element")
    plt.title("Memory allocation for different data types")
    plt.xticks(rotation=45)
    plt.tight_layout()
    plt.show()

Memory blocks

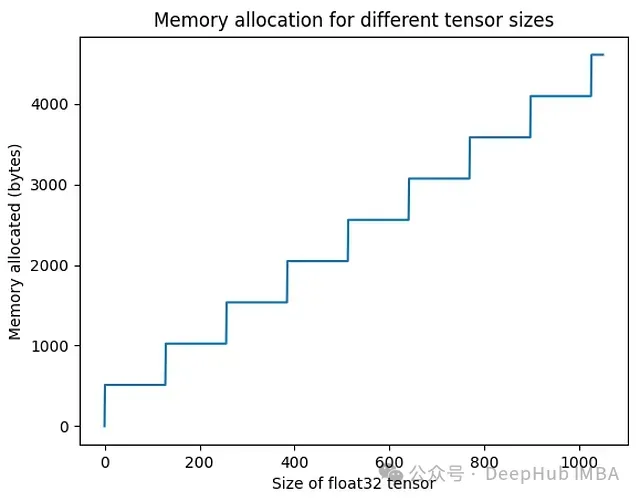

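Memory is allocated in blocks of 512 bytes. When a tensor is created, its size is rounded up to the next multiple of 512. A float32 tensor of shape (800,) therefore takes 3584 bytes (512 * 7) rather than 800 * 4 = 3200 bytes.

Figure 2: Memory allocation for different tensor sizes.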
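The rounding rule can be written down in a couple of lines. This is a minimal sketch, assuming the 512-byte block size described above; `BLOCK_SIZE` and `round_up_to_block` are names chosen here for illustration.

```python
BLOCK_SIZE = 512  # block size described in the text

def round_up_to_block(num_bytes: int, block: int = BLOCK_SIZE) -> int:
    """Round a byte count up to the next multiple of the block size."""
    return (num_bytes + block - 1) // block * block

raw = 800 * 4  # float32 tensor of shape (800,)
print(raw, round_up_to_block(raw))  # 3200 3584
```

The experiments later in the article use the same expression, with the block size hard-coded, under the name next_chunk.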

def test_memory_allocation_relationship():
    """
    For different sizes of tensors, check the memory allocated on GPU.
    """
    memories = []
    sizes = 1050
    for i in tqdm(range(sizes)):
        # Read PyTorch's allocation counter; nvidia-smi's MiB resolution
        # cannot show 512-byte steps.
        base_memory = torch.cuda.memory_allocated(device_id)
        x = torch.randn((i,), dtype=torch.float32, device=device)
        memory_after_allocation = torch.cuda.memory_allocated(device_id)
        memories.append(memory_after_allocation - base_memory)
        del x
        torch.cuda.empty_cache()

    plt.plot(memories)
    plt.xlabel("Size of float32 tensor")
    plt.ylabel("Memory allocated (bytes)")
    plt.title("Memory allocation for different tensor sizes")
    plt.show()

Trainable parameters (forward pass of a single linear layer)

Next we look at a single linear layer, run a forward pass, and work out the memory it needs.

def test_single_linear_layer_forward_allocation():
    # Disable cublas
    # import os; os.environ["CUBLAS_WORKSPACE_CONFIG"] = ":0:0"
    print(f"Base memory: {torch.cuda.memory_allocated(device_id)}")

    model = nn.Linear(256, 250, device=device, dtype=torch.float32)
    print(f"Memory after model allocation: {torch.cuda.memory_allocated(device_id)}")

    x = torch.randn((1, 256,), dtype=torch.float32, device=device)
    print(f"Memory after input allocation: {torch.cuda.memory_allocated(device_id)}")

    y = model(x)
    final_memory = torch.cuda.memory_allocated(device_id)
    print(f"Memory after forward pass: {final_memory}")

    # Memory calculations
    w_mem = len(model.weight.flatten()) * model.weight.dtype.itemsize
    # Round up to the next multiple of 512
    w_mem_as_chunks = (w_mem + 511) // 512 * 512
    print(f"{model.weight.shape=}, {w_mem=}, {w_mem_as_chunks=}")

    b_mem = len(model.bias) * model.bias.dtype.itemsize
    b_mem_as_chunks = (b_mem + 511) // 512 * 512
    print(f"{model.bias.shape=}, {b_mem=}, {b_mem_as_chunks=}")

    x_mem = (len(x.flatten()) * x.dtype.itemsize + 511) // 512 * 512
    y_mem = (len(y.flatten()) * y.dtype.itemsize + 511) // 512 * 512
    print(f"{x_mem=}, {y_mem=}")

    total_memory_expected = w_mem_as_chunks + b_mem_as_chunks + x_mem + y_mem
    cublas_workspace_size = 8519680
    memory_with_cublas = total_memory_expected + cublas_workspace_size
    print(f"{total_memory_expected=}, {memory_with_cublas=}")
    assert final_memory == memory_with_cublas

    del model, x, y
    torch.cuda.empty_cache()
    print(f"Memory after cleanup: {torch.cuda.memory_allocated(device_id)}")

    torch._C._cuda_clearCublasWorkspaces()
    print(f"Memory after clearing cublas workspace: {torch.cuda.memory_allocated(device_id)}")

"""
Output:
Base memory: 0
Memory after model allocation: 257024
Memory after input allocation: 258048
Memory after forward pass: 8778752
model.weight.shape=torch.Size([250, 256]), w_mem=256000, w_mem_as_chunks=256000
model.bias.shape=torch.Size([250]), b_mem=1000, b_mem_as_chunks=1024
x_mem=1024, y_mem=1024
total_memory_expected=259072, memory_with_cublas=8778752
Memory after cleanup: 8519680
Memory after clearing cublas workspace: 0
"""

The model has a float32 weight matrix of shape (250, 256), which takes 250 * 256 * 4 = 256,000 bytes. That is already an exact multiple of the 512-byte block size (512 * 500 = 256,000). The bias has 250 float32 values and needs 250 * 4 = 1000 bytes, which rounds up to the next multiple of 512: 512 * 2 = 1024 bytes. x has shape (1, 256) and takes exactly 1024 bytes; y has shape (1, 250), so its 1000 bytes also round up to 1024. Total memory = weight + bias + x + y.

Adding everything up gives 259,072 bytes (256,000 + 1024 + 1024 + 1024). The observed size, however, is 8,778,752 bytes. The extra 8,519,680 bytes come from the cuBLAS workspace allocation.

This is memory set aside for fast matrix multiplication. Some matmul operations trigger the allocation of a new 8,519,680-byte block. The size can vary with the GPU and the Python environment. The cuBLAS memory does not go away when torch.cuda.empty_cache() is called; it takes torch._C._cuda_clearCublasWorkspaces() to actually clear it. You can also disable the cuBLAS workspace by setting the environment variable os.environ["CUBLAS_WORKSPACE_CONFIG"] = ":0:0". That likely trades execution speed for memory, though, so we stick with the default.
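The same arithmetic can be reproduced without a GPU. The sketch below is a plain-Python estimate under the assumptions stated in this section: 512-byte rounding and the 8,519,680-byte workspace observed in this particular run (it may differ on other GPUs or environments). The helper names `round_up` and `linear_forward_bytes` are made up for illustration.

```python
# Workspace size observed in the experiment above; treat it as an assumption.
CUBLAS_WORKSPACE_BYTES = 8_519_680

def round_up(num_bytes: int, block: int = 512) -> int:
    """Round a byte count up to the next multiple of the block size."""
    return (num_bytes + block - 1) // block * block

def linear_forward_bytes(in_features, out_features, batch_size=1, itemsize=4):
    """Estimated allocation after one forward pass through a linear layer."""
    parts = {
        "weight": round_up(in_features * out_features * itemsize),
        "bias": round_up(out_features * itemsize),
        "input": round_up(batch_size * in_features * itemsize),
        "output": round_up(batch_size * out_features * itemsize),
    }
    parts["tensors_total"] = sum(parts.values())
    parts["with_cublas"] = parts["tensors_total"] + CUBLAS_WORKSPACE_BYTES
    return parts

estimate = linear_forward_bytes(256, 250)
for name, value in estimate.items():
    print(f"{name:>14}: {value}")
```

For the 256-to-250 layer with a batch of one, the two totals come out to 259,072 and 8,778,752 bytes, the same figures as in the printed output above.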

Gradients (backward pass of a single linear layer)

Using the same model, run loss.backward(). For simplicity, take the loss to be loss = y.sum().

def test_single_linear_layer_backward_allocation():
    print(f"Base memory: {torch.cuda.memory_allocated(device_id)}")

    model = nn.Linear(256, 250, device=device, dtype=torch.float32)
    x = torch.randn((1, 256,), dtype=torch.float32, device=device)
    y = model(x)
    print(f"Memory after forward pass: {torch.cuda.memory_allocated(device_id)}")

    y.sum().backward()
    final_memory = torch.cuda.memory_allocated(device_id)
    print(f"Memory after backward pass: {final_memory}")

    # Memory calculations
    next_chunk = lambda n: (n + 511) // 512 * 512
    units = model.weight.dtype.itemsize  # 4 bytes for float32
    mem = next_chunk(len(model.weight.flatten()) * units)
    mem += next_chunk(len(model.bias) * units)
    print(f"Expected model memory: {mem}")

    x_mem = next_chunk(len(x.flatten()) * units)
    y_mem = next_chunk(len(y.flatten()) * units)
    print(f"{x_mem=}, {y_mem=}")
    mem += x_mem + y_mem

    # Gradient memory
    w_grad_mem = next_chunk(len(model.weight.grad.flatten()) * units)
    b_grad_mem = next_chunk(len(model.bias.grad.flatten()) * units)
    print(f"{model.weight.grad.shape=}, {w_grad_mem=}")
    print(f"{model.bias.grad.shape=}, {b_grad_mem=}")
    mem += w_grad_mem + b_grad_mem

    mem += 2 * 8519680  # cublas_size doubled
    print(f"Total memory expected: {mem}")
    assert final_memory == mem

    del model, x, y
    torch.cuda.empty_cache()
    print(f"Memory after cleanup: {torch.cuda.memory_allocated(device_id)}")

    torch._C._cuda_clearCublasWorkspaces()
    print(f"Memory after clearing cublas workspace: {torch.cuda.memory_allocated(device_id)}")

"""
Output:
Base memory: 0
Memory after forward pass: 8778752
Memory after backward pass: 17555456
Expected model memory: 257024
x_mem=1024, y_mem=1024
model.weight.grad.shape=torch.Size([250, 256]), w_grad_mem=256000
model.bias.grad.shape=torch.Size([250]), b_grad_mem=1024
Total memory expected: 17555456
Memory after cleanup: 17039360
Memory after clearing cublas workspace: 0
"""

Every model parameter with requires_grad=True gets a .grad member that stores the gradient of the underlying tensor, so the memory taken by the model doubles.

This time two cuBLAS workspace blocks are allocated, presumably one for the forward pass and one for the backward pass. Exactly when cuBLAS allocates a new block is still unclear at this point.
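To see where the post-backward total comes from, here is a small plain-Python estimate. It is a sketch under the assumptions of this section: one gradient per parameter, 512-byte rounding, and two workspaces of 8,519,680 bytes each, as observed in this run. The function name and its parameters are illustrative.

```python
def round_up(num_bytes: int, block: int = 512) -> int:
    """Round a byte count up to the next multiple of the block size."""
    return (num_bytes + block - 1) // block * block

def linear_after_backward_bytes(in_features, out_features, batch_size=1,
                                itemsize=4, cublas_workspace=8_519_680,
                                n_workspaces=2):
    """Estimated allocation after forward + backward through a linear layer."""
    params = (round_up(in_features * out_features * itemsize)
              + round_up(out_features * itemsize))
    grads = params  # each parameter gets a .grad of the same shape
    activations = (round_up(batch_size * in_features * itemsize)     # x
                   + round_up(batch_size * out_features * itemsize))  # y
    return params + grads + activations + n_workspaces * cublas_workspace

total = linear_after_backward_bytes(256, 250)
print(total)  # 17555456
```

The workspace count is the least certain input here; set n_workspaces to whatever your own run shows.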

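Intermediate tensors (multi-layer feed-forward network)

When a model runs in inference mode there is no autograd graph, so no intermediate tensors need to be stored. The memory is simply the sum of the memory for each layer.

Things are different in training mode, where the computation graph has to be tracked. When several operations are applied in sequence, as in a feed-forward network or any deep network, the autograd graph has to remember the intermediate tensors of those operations. What has to be stored depends on the nature of each operation's partial derivatives. These intermediate tensors are cleared from memory during the backward pass. Let's look at some examples, where x is the input and w is a parameter that requires a gradient (w.requires_grad = True).

- x @ w needs no extra storage: the partial derivative with respect to w is x, which is already in memory. But when x is itself the output of another operation, such as x = u * w1, x has to be stored as well.

- x + w needs no storage either, because the partial derivative with respect to w is the constant 1 and does not depend on x.

- (x * 2) @ w has to store the operand x * 2, because it is needed to compute the gradient.

- (((x + 2) @ w1) + 3) * w2 is an interesting case that mimics two layers.

  - For the partial derivative with respect to w1, we need to store x + 2.

  - For the partial derivative with respect to w2, we need to store ((x + 2) @ w1) + 3.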
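The storage rules in the list above follow from the chain rule. As a sketch, write L for a scalar loss, a for the other operand (x, x * 2, or x + 2 in the examples), and z for the result of the operation. L, a and z are notation introduced here, not names from the code, and the addition and elementwise cases assume a and w have the same shape.

```latex
\begin{aligned}
z = a\,w
  &\;\Rightarrow\; \frac{\partial L}{\partial w} = a^{\top}\,\frac{\partial L}{\partial z}
  && \text{(needs } a \text{, so } a \text{ must be kept)} \\
z = a + w
  &\;\Rightarrow\; \frac{\partial L}{\partial w} = \frac{\partial L}{\partial z}
  && \text{(needs no operand)} \\
z = a \odot w
  &\;\Rightarrow\; \frac{\partial L}{\partial w} = a \odot \frac{\partial L}{\partial z}
  && \text{(needs } a \text{, so } a \text{ must be kept)}
\end{aligned}
```

In the two-layer example, the first line applies with a = x + 2 for w1, and the third line applies with a = ((x + 2) @ w1) + 3 for w2, which is why exactly those two tensors are stored.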

Let's look at an implementation for a deeper network:

def test_multi_layer_forward():
    print(f"Base memory: {torch.cuda.memory_allocated(device_id)}")

    inference_mode = False
    n_layers = 1
    model = nn.Sequential(*[
        nn.Sequential(
            nn.Linear(200, 100),
            nn.ReLU(),  # No trainable params
            nn.Linear(100, 200),
            nn.Sigmoid(),  # No trainable params
        )
        for _ in range(n_layers)
    ]).to(device_id)
    batch_size = 5
    x = torch.randn((batch_size, 200), device=device_id)
    with torch.inference_mode(inference_mode):
        y = model(x)
    final_memory = torch.cuda.memory_allocated(device_id)
    print(f"Memory after forward pass: {final_memory}")

    # Computed memory
    next_chunk = lambda n: (n + 511) // 512 * 512
    mem = 0
    unit = model[0][0].weight.dtype.itemsize
    for block in model:
        for layer in block:
            if isinstance(layer, nn.Linear):
                mem += next_chunk(len(layer.weight.flatten()) * unit)
                mem += next_chunk(len(layer.bias) * unit)
                if not inference_mode:
                    # Training mode has to store the input of each linear layer
                    mem += next_chunk(layer.in_features * batch_size * unit)
    mem += next_chunk(len(y.flatten()) * unit)
    mem += 8519680  # cublas_size
    if inference_mode:
        mem += next_chunk(len(y.flatten()) * unit)
    print(f"Total memory expected: {mem}")
    assert final_memory == mem

In normalization layers such as BatchNorm1d, LayerNorm, and RMSNorm, the input x goes through an operation before it is multiplied by the parameter w, for example (x - x.mean()) / (x.std() + 1e-6) * w. The operand (x - x.mean()) / (x.std() + 1e-6) is an intermediate output that has to be stored. There may also be other state to account for, such as BatchNorm's running_mean and running_var, or intermediate tensors created inside the forward() method. Some of these intermediates are not accessible to us, so we cannot be sure exactly what is happening. Once a batch dimension is involved, it gets even more complicated.

def test_layer_norm():
    print(f"Base memory: {torch.cuda.memory_allocated(device_id)}")

    x = torch.rand((10,), device=device_id)
    w = torch.rand((10,), requires_grad=True, device=device_id)

    # Layer Norm
    y = (x - x.mean()) / (x.std() + 1e-6) * w
    final_memory = torch.cuda.memory_allocated(device_id)
    print(f"Memory after forward pass: {final_memory}")

    # Memory calculations
    next_chunk = lambda n: (n + 511) // 512 * 512
    mem = next_chunk(len(x.flatten()) * x.dtype.itemsize)
    mem += next_chunk(len(w.flatten()) * w.dtype.itemsize)
    mem += next_chunk(len(y.flatten()) * y.dtype.itemsize)
    mem += next_chunk(len(x.flatten()) * x.dtype.itemsize)  # intermediate
    print(f"Total memory expected: {mem}")
    assert final_memory == mem

The backward pass is very similar, with a few changes:

- The model size doubles because of gradient storage.

- All intermediate tensors have been cleared by the end.

- A new cuBLAS workspace is allocated.

def test_multi_layer_backward():
    print(f"Base memory: {torch.cuda.memory_allocated(device_id)}")

    n_layers = 1
    model = nn.Sequential(*[
        nn.Sequential(
            nn.Linear(200, 100),
            nn.ReLU(),  # No trainable params
            nn.Linear(100, 200),
            nn.Sigmoid(),  # No trainable params
        )
        for _ in range(n_layers)
    ]).to(device_id)
    batch_size = 5
    x = torch.randn((batch_size, 200), device=device_id)
    y = model(x)
    print(f"Memory after forward pass: {torch.cuda.memory_allocated(device_id)}")

    y.sum().backward()
    final_memory = torch.cuda.memory_allocated(device_id)
    print(f"Memory after backward pass: {final_memory}")

    # Computed memory
    next_chunk = lambda n: (n + 511) // 512 * 512
    mem = 0
    unit = model[0][0].weight.dtype.itemsize
    for block in model:
        for layer in block:
            if isinstance(layer, nn.Linear):
                mem += next_chunk(len(layer.weight.flatten()) * unit) * 2  # Weights and gradients
                mem += next_chunk(len(layer.bias) * unit) * 2  # Biases and gradients
                # mem += next_chunk(layer.in_features * batch_size * unit)  # Intermediate tensors are cleared
    mem += next_chunk(len(y.flatten()) * unit)
    mem += 2 * 8519680  # cublas_size doubled
    mem += next_chunk(len(y.flatten()) * unit)
    print(f"Total memory expected: {mem}")
    assert final_memory == mem

Optimizer (single linear layer, backward pass)

Let's observe the memory allocation over a few optimization steps.

def test_single_linear_layer_with_optimizer():

# Disable cublas

import os; os.environ["CUBLAS_WORKSPACE_CONFIG"] = ":0:0"

memory_timeline_real = []

add = lambda e: memory_timeline_real.append({"event": e, "memory": torch.cuda.memory_allocated(device_id)})

add("baseline")

in_size = 256

out_size = 250

batch_size = 100

model = nn.Linear(in_size, out_size, device=device, dtype=torch.float32)

add("model_allocation")

optimizer = torch.optim.Adam(model.parameters(), lr=0.001)

add("optimizer_init")

x = torch.randn((batch_size, in_size,), dtype=torch.float32, device=device)

add("input_allocation")

def step(n):

optimizer.zero_grad()

add(f"optim_zero_grad_{n}")

y = model(x)

add(f"forward_{n}")

y.sum().backward()

add(f"backward_{n}")

optimizer.step()

del y

add(f"optim_step_{n}")

for i in range(4):

step(i + 1)

# Bar chart with even name on x-axis and total_memory on y-axis

fig = plt.figure(figsize=(15, 7))

fig.set_tight_layout(True)

plt.ylim((0, 1_300_000))

plt.bar([event["event"] for event in memory_timeline_real], [event["memory"] for event in memory_timeline_real])

plt.xlabel("Event")

plt.ylabel("Total memory allocated (bytes)")

plt.title(f"Memory allocation during training ({type(optimizer)})")

plt.xticks(rotation=45)

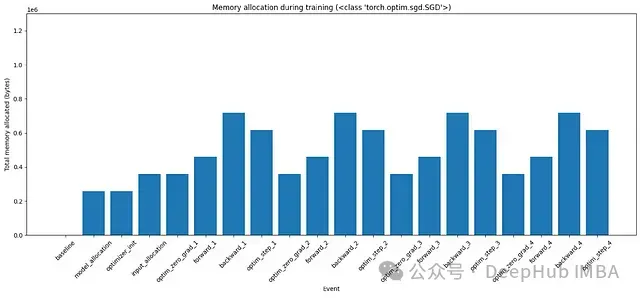

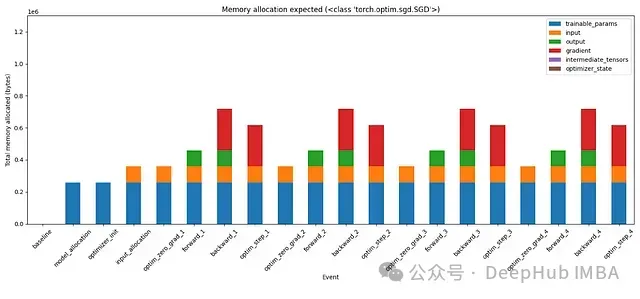

plt.show() 圖3:使用SGD優化器在訓練的各個階段的內存分配

圖3:使用SGD優化器在訓練的各個階段的內存分配

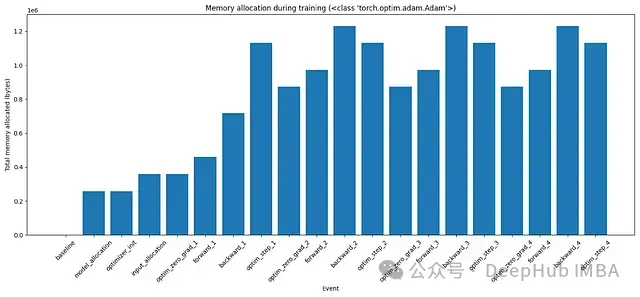

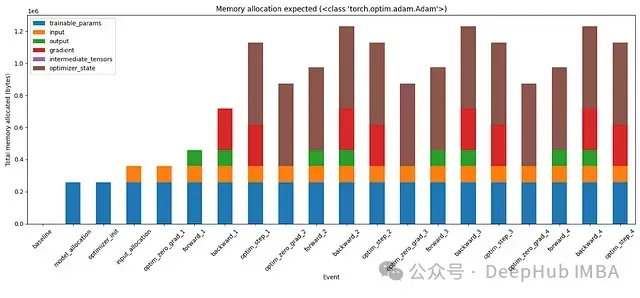

Figure 4: Memory allocation at each stage of training with the Adam optimizer

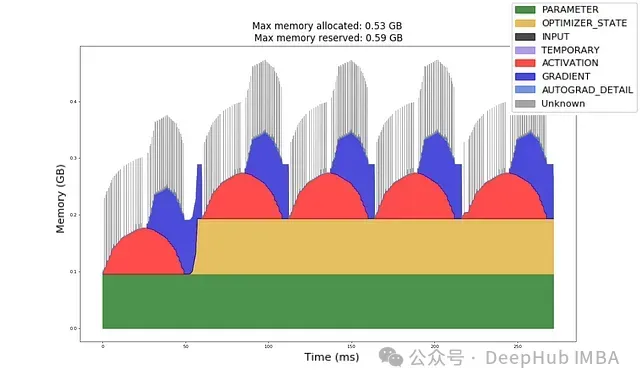

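Up to backward_1, memory allocation is as expected. By the time optimizer.step() finishes, y has been deleted in this particular code, so that memory is freed. Under the hood, the optimizer grabs extra memory (equal to the size of the trainable parameters) to update them and releases it after the update. This does not show up in the chart. A more detailed timeline can be seen in Figure 5 below.

Adam keeps a first moment and a second moment for every trainable parameter, so it always holds twice the model size in memory. This is the most memory-hungry part of training in this code.

Figure 5: Memory allocation timeline, in milliseconds.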

Now let's try computing these memory requirements by hand:

    # Memory calculations (continuing from previous code block,
    # still inside test_single_linear_layer_with_optimizer)
    units = model.weight.dtype.itemsize
    memory_timeline = []
    all_keys = ["trainable_params", "input", "output", "gradient", "intermediate_tensors", "optimizer_state"]

    def update_memory(event: str, update: dict):
        # Each entry is the previous state plus the deltas in `update`
        prev_state = memory_timeline[-1] if memory_timeline else {k: 0 for k in all_keys}
        new_state = {k: prev_state.get(k, 0) + update.get(k, 0) for k in all_keys}
        new_state["event"] = event
        memory_timeline.append(new_state)

    next_chunk = lambda n: (n + 511) // 512 * 512
    update_memory("baseline", {})

    # Model memory
    model_mem = next_chunk(len(model.weight.flatten()) * units)
    model_mem += next_chunk(len(model.bias) * units)
    update_memory("model_allocation", {"trainable_params": model_mem})
    update_memory("optimizer_init", {})

    # Input memory
    x_mem = next_chunk(len(x.flatten()) * units)
    update_memory("input_allocation", {"input": x_mem})
    update_memory("optim_zero_grad_1", {})

    # Forward
    y_mem = next_chunk(batch_size * out_size * units)
    # Add any intermediate tensors here.
    update_memory("forward_1", {"output": y_mem})  # , "intermediate_tensors": ...})

    # Backward
    grad_mem = next_chunk(len(model.weight.grad.flatten()) * units)
    grad_mem += next_chunk(len(model.bias.grad.flatten()) * units)
    # Clear any intermediate tensors here.
    update_memory("backward_1", {"gradient": grad_mem})  # "intermediate_tensors": ...})

    # Optimizer memory
    if isinstance(optimizer, torch.optim.SGD):
        # SGD has parameters in memory. They are cleared after each step.
        optimizer_mem = 0
    elif isinstance(optimizer, torch.optim.Adam):
        # Adam has parameters and 2 momentum buffers. Parameters are cleared after each step.
        optimizer_mem = 2 * model_mem
    else:
        # A bare `raise` has no active exception here, so raise explicitly
        raise NotImplementedError(f"Unsupported optimizer: {type(optimizer)}")
    update_memory("optim_step_1", {"optimizer_state": optimizer_mem, "output": -y_mem})

    # Loop variable renamed so it does not shadow the step() function above
    for n in range(2, 5):
        update_memory(f"optim_zero_grad_{n}", {"gradient": -grad_mem})
        update_memory(f"forward_{n}", {"output": y_mem})
        update_memory(f"backward_{n}", {"gradient": grad_mem})
        update_memory(f"optim_step_{n}", {"output": -y_mem})

    # Make totals
    for event in memory_timeline:
        event["total"] = sum([v for v in event.values() if isinstance(v, int)])

    # Plot memory timeline
    import pandas as pd
    df = pd.DataFrame(memory_timeline, columns=all_keys + ["event"])
    df.set_index("event", inplace=True, drop=True)
    df.plot(kind='bar', stacked=True, figsize=(15, 7), ylim=(0, 1_300_000), xlabel="Event", ylabel="Total memory allocated (bytes)", title=f"Memory allocation expected ({type(optimizer)})")
    plt.tight_layout()
    plt.xticks(rotation=45)
    plt.show()

    # Compare the two timelines
    for i, (real, expected) in enumerate(zip(memory_timeline_real, memory_timeline)):
        assert real["memory"] == expected["total"], f"Memory mismatch at {real['event']}: {real['memory']} != {expected['total']}"

Figure 6: Memory usage by segment at different stages of training with the SGD optimizer

Figure 7: Memory usage by segment at different stages of training with the Adam optimizer

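After computing the memory allocation by hand, our numbers match the observations. This time we can also see how the memory is split among the different tensors. Adam's state, for example, takes up twice the model size. Notice also how the gradient segment (red) changes from step to step. If you want to keep experimenting, try adding more layers to this model, adding intermediate tensors and deleting them at the right time. That should create another segment in these bar charts representing the intermediate tensors.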

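Summary

Putting the concepts above together, we can answer the main question:

- Trainable parameters: a fixed cost, the model size

- Memory blocks: memory only comes in blocks of 512 bytes

- cuBLAS memory: one block for the forward pass, one for the backward pass

- Gradients: the same size as the model

- Intermediate tensors: the trickiest part; depends on how the code is written

- Optimizer: allocates at least one times the model size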
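The items in this list can be folded into one back-of-the-envelope estimator. The sketch below covers only a single linear layer and relies on several assumptions: 512-byte rounding, no stored intermediates beyond the input and output, `optimizer_copies=2` for Adam (use 0 for the SGD case as modeled in the manual calculation above), and `cublas_bytes=0` to mirror the optimizer experiment, where the workspace was disabled. `MemoryBudget` and `linear_training_budget` are hypothetical names.

```python
from dataclasses import dataclass

def round_up(num_bytes: int, block: int = 512) -> int:
    """Round a byte count up to the next multiple of the block size."""
    return (num_bytes + block - 1) // block * block

@dataclass
class MemoryBudget:
    params: int
    grads: int
    optimizer_state: int
    activations: int  # input, output and any stored intermediates
    cublas: int

    @property
    def total(self) -> int:
        return (self.params + self.grads + self.optimizer_state
                + self.activations + self.cublas)

def linear_training_budget(in_features, out_features, batch_size,
                           itemsize=4, optimizer_copies=2, cublas_bytes=0):
    """Peak-memory estimate for training one linear layer."""
    params = (round_up(in_features * out_features * itemsize)
              + round_up(out_features * itemsize))
    activations = (round_up(batch_size * in_features * itemsize)
                   + round_up(batch_size * out_features * itemsize))
    return MemoryBudget(
        params=params,
        grads=params,                               # same size as the model
        optimizer_state=optimizer_copies * params,  # Adam: two moments
        activations=activations,
        cublas=cublas_bytes,
    )

budget = linear_training_budget(256, 250, batch_size=100)
print(budget)
print("total bytes:", budget.total)
```

With the 256-to-250 layer and a batch of 100, the total is 1,230,848 bytes, which fits under the 1,300,000-byte y-axis limit used in the charts. For deeper models the activations term is the part that needs case-by-case work.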

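One last question: we have only dealt with feed-forward layers, so what about CNNs, Transformers, RNNs, and so on? A CNN layer is an operation similar to a feed-forward layer, so its memory can be worked out from its own computation rules. Transformers and RNNs are combinations of basic operations, so the per-layer calculations can be combined according to their architecture. Now that we know how to compute the memory requirements of a feed-forward layer, we can work these cases out ourselves!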